Introduction

In today’s digital age, computers constantly transmit and store large amounts of information. From sending emails and streaming movies to online banking and cloud storage, data is always moving across networks. During transfer or storage, however, errors can occur because of electrical interference, hardware failure, weak network connections, or damaged storage devices. Even an error in a single bit can corrupt a file, break communication, or cause a system to operate incorrectly. That is why error detection techniques are such an important part of modern computing.

Error detection methods find problems in digital data before that data is accepted or processed by a system. They also support data integrity, meaning that what is received is the same as what was sent or stored. Without proper error detection, digital communication and storage systems would be unreliable and highly vulnerable to corruption.

Modern computing environments rely on error detection techniques to ensure accuracy and reliability, and in some cases to support security. These techniques are used in networking, cloud computing, databases, operating systems, communication protocols, and storage technologies. By identifying errors early, a system can request retransmission of the data, repair corrupted information, or prevent bad data from causing further problems.

This article looks at the main error detection methods used in IT: parity checks, checksums, cyclic redundancy checks (CRC), and hash functions. It also describes how these techniques work and the value they have in upholding digital trust.

Understanding Data Errors in Computing

Before we get into specific techniques, we should look at how data errors arise in computing systems.

Digital information is represented in binary, as a sequence of bits. These bits are stored and transmitted as electrical signals. While the information is being transmitted or stored, noise may change a bit from 0 to 1 or from 1 to 0. That change is what we call an error.
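
A single-bit error of this kind can be modeled in a few lines of Python. This is only an illustrative sketch: the helper name `flip_bit` and the sample value are assumptions made for the example, not part of any real system.

```python
def flip_bit(value: int, position: int) -> int:
    """Return `value` with the bit at `position` inverted (0 = least significant bit)."""
    # XOR with a one-bit mask turns a 0 into a 1 and a 1 into a 0.
    return value ^ (1 << position)


original = 0b0101110               # seven data bits
corrupted = flip_bit(original, 2)  # simulate noise hitting one bit

print(f"sent:     {original:07b}")
print(f"received: {corrupted:07b}")
```

The two values differ in exactly one position, which is the smallest error a detection scheme has to catch.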

Errors have many causes, including:

- Electrical noise in communication channels

- Weak wireless signals

- Hardware malfunctions

- Damaged storage media

- Power interruptions

- Software bugs

- Environmental interference

For instance, when a file is sent over the internet and a single bit changes unexpectedly, the receiver may end up with corrupted data. In systems such as financial networks, health databases, or banking platforms, such faults can cause serious problems.

To avoid these problems, computing systems apply error detection methods before the data is accepted for processing.

The Importance of Error Detection

Error detection is essential in a digital world that relies on the accuracy and reliability of information. As users transact online, upload content, or store documents in the cloud, the integrity of that data must be protected.

The importance of error detection includes:

Maintaining Data Accuracy

Error detection makes it possible to verify that data has not been altered during transfer or storage. Accurate data is of great importance to companies, governments, and individuals.

Improving Communication Reliability

Networks perform error detection to identify defective data packets. When an error is found, the system may request retransmission of the information to keep the connection dependable.

Protecting Sensitive Information

In areas like banking and health care, even a small error may put important data at risk. Error detection is key to protecting sensitive information.

Supporting System Stability

Corrupt data may cause software to crash or behave unpredictably. Identifying problems early helps keep systems stable and efficient.

Enhancing User Trust

Users expect digital services that work well. Reliable error detection increases confidence in online systems and digital platforms.

Because of these benefits, many error detection methods are built into hardware devices, operating systems, and communication protocols.

Parity Check Method

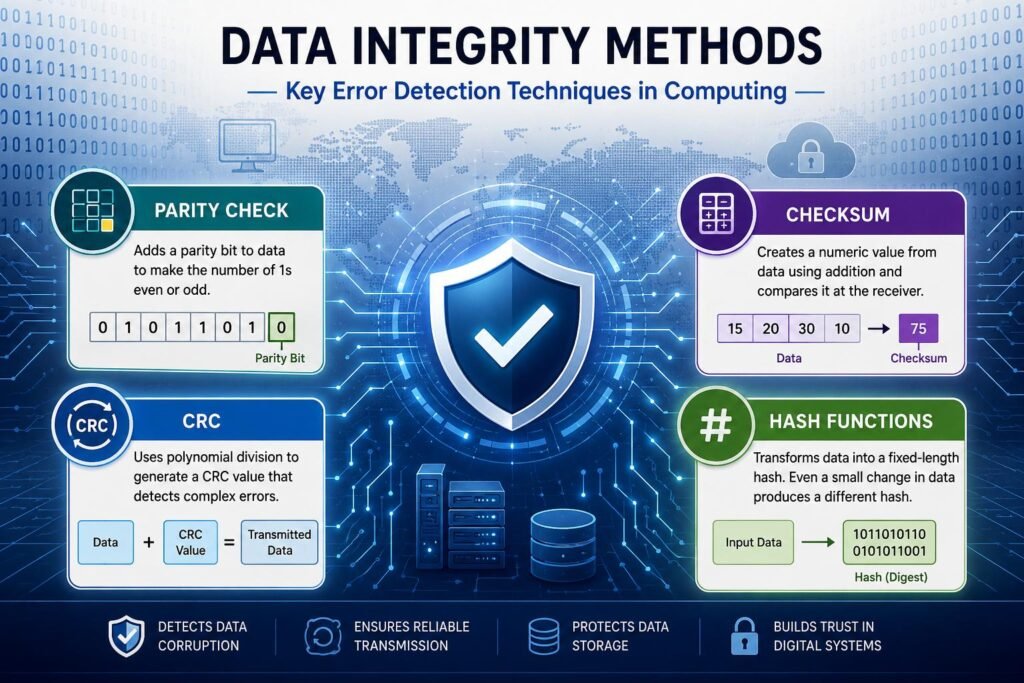

One of the oldest and most basic error detection methods is the parity check. A parity bit is added to a group of binary data so that the total number of 1s, including the parity bit, is either even or odd, depending on the scheme.

There are two primary types of parity:

- Even parity

- Odd parity

Even Parity

In even parity, the total number of 1s, counting the parity bit, must be even. If the data already has an even number of 1s, the parity bit is set to 0. If the number is odd, the parity bit is set to 1.

For example:

Data: 0101110

Number of 1s = 4 (even)

Parity bit = 0

Transmitted data: 01011100 (the data bits followed by the parity bit)

If a single bit changes during transmission, the number of 1s becomes odd and the receiver sees that the parity rule is broken.
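
The even-parity rule can be sketched in Python as follows. This is a minimal illustration, not a standard implementation: the function names are hypothetical, bits are modeled as a string of '0' and '1' characters, and appending the parity bit after the data bits is an assumption (real systems differ on where the bit is placed).

```python
def even_parity_bit(bits: str) -> str:
    """Return the parity bit that makes the total number of 1s even."""
    return "1" if bits.count("1") % 2 else "0"


def add_even_parity(bits: str) -> str:
    """Sender side: append the parity bit to the data bits (assumed placement)."""
    return bits + even_parity_bit(bits)


def check_even_parity(frame: str) -> bool:
    """Receiver side: True if the frame still contains an even number of 1s."""
    return frame.count("1") % 2 == 0


frame = add_even_parity("0101110")
print(frame, check_even_parity(frame))

# Simulate a single-bit error in the first position.
damaged = ("1" if frame[0] == "0" else "0") + frame[1:]
print(damaged, check_even_parity(damaged))
```

For odd parity, the same sketch works with the two conditions inverted.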

Odd Parity

In odd parity, the parity bit is chosen so that the total number of 1s is odd.

Advantages of Parity Checks

- Simple to implement

- Requires minimal additional storage

- Fast processing speed

Limitations of Parity Checks

- Reliably detects only an odd number of flipped bits, most commonly a single-bit error

- Cannot identify which bit is wrong, so it cannot correct the error

- Fails when an even number of bits change at the same time
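
The last limitation is easy to demonstrate. The sketch below assumes an even-parity frame held as a string of bits; the helper names and the sample frame are illustrative only.

```python
def has_even_parity(frame: str) -> bool:
    """True if the frame contains an even number of 1s."""
    return frame.count("1") % 2 == 0


def flip(frame: str, *positions: int) -> str:
    """Return a copy of the frame with the bits at the given indexes inverted."""
    bits = list(frame)
    for p in positions:
        bits[p] = "1" if bits[p] == "0" else "0"
    return "".join(bits)


frame = "01011100"              # valid even-parity frame (four 1s)
one_error = flip(frame, 0)      # one bit flipped
two_errors = flip(frame, 0, 1)  # two bits flipped

print(has_even_parity(one_error))   # the single error breaks the parity rule
print(has_even_parity(two_errors))  # the double error still satisfies it
```

Two flips either add two 1s, remove two 1s, or swap a 1 for a 0, so the count stays even and the corrupted frame is accepted.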

Despite these limitations, parity checking is still used in memory systems and low-level communication because of its simplicity.

Checksum Technique

A checksum is a very common method of error detection in computing. It is a value computed from a block of data before transfer or storage. The sender transmits this value along with the data. The receiver performs the same calculation and compares the result with the original checksum. If the values match, the data is assumed to be intact. If they do not match, an error is detected.

How Checksums Work

Suppose a system wants to transmit the numerical data 15, 20, 30, and 10:

15 + 20 + 30 + 10 = 75

The value 75 becomes the checksum.

The receiver performs the same calculation when the data arrives. If the total is not 75, the system knows that an error has occurred.
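
The worked example translates directly into code. This is a deliberately simplified sketch: the function names are hypothetical, and a plain sum is used as in the example, whereas practical checksums are usually truncated to a fixed width such as 8 or 16 bits.

```python
def simple_checksum(values: list[int]) -> int:
    """Sender side: add up all values in the block."""
    return sum(values)


def verify(values: list[int], checksum: int) -> bool:
    """Receiver side: recompute the sum and compare it with the transmitted one."""
    return simple_checksum(values) == checksum


data = [15, 20, 30, 10]
checksum = simple_checksum(data)
print(checksum)

print(verify([15, 20, 30, 10], checksum))  # data arrived intact
print(verify([15, 20, 31, 10], checksum))  # one value was altered in transit
```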

Uses of Checksums

Checksums are used in:

- File downloads

- Network communication

- Database systems

- Software installation packages

- Email transmission

Advantages of Checksums

- More effective than simple parity checks

- Easy to calculate

- Suitable for large sets of data

Limitations of Checksums

- Some errors may go undetected, such as reordered values or changes that cancel each other out

- Less robust than more advanced methods like CRC
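
A short sketch shows how a plain additive checksum can miss errors. It assumes the simple sum-of-values scheme from the earlier example; the corrupted blocks are invented for illustration.

```python
def simple_checksum(values: list[int]) -> int:
    """Additive checksum: the sum of all values in the block."""
    return sum(values)


original = [15, 20, 30, 10]
reordered = [20, 15, 30, 10]      # two values swapped in transit
compensating = [16, 19, 30, 10]   # one value too high, another too low

for block in (original, reordered, compensating):
    print(block, simple_checksum(block))
```

All three blocks produce the same checksum, so a receiver relying on the sum alone would accept the two corrupted blocks as valid.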

Despite these limitations, checksums remain widely used because they offer a balance of performance and reliability.

Cyclic Redundancy Check (CRC)

The Cyclic Redundancy Check (CRC) is one of the most effective and widespread error detection techniques in computing. CRC treats the data as a binary polynomial and divides it by a fixed generator polynomial; the remainder of that division serves as the check value. This makes it very effective at identifying accidental changes to transmitted data.
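
The division step can be sketched with bit strings. The example below is a teaching sketch only: the function names are hypothetical, and the 10-bit message and 5-bit generator 10011 are a small textbook-sized case chosen for readability, not a generator used by any particular protocol.

```python
def mod2_remainder(bits: str, generator: str) -> str:
    """Divide `bits` by `generator` using modulo-2 (XOR) arithmetic; return the remainder."""
    work = [int(b) for b in bits]
    gen = [int(b) for b in generator]
    # Slide the generator along the bits; wherever the leading bit is 1, XOR it in.
    for i in range(len(work) - len(gen) + 1):
        if work[i] == 1:
            for j, g in enumerate(gen):
                work[i + j] ^= g
    # The remainder is one bit shorter than the generator.
    return "".join(str(b) for b in work[-(len(gen) - 1):])


def crc_remainder(data: str, generator: str) -> str:
    """Sender side: pad the data with zeros, then take the remainder as the CRC."""
    padded = data + "0" * (len(generator) - 1)
    return mod2_remainder(padded, generator)


data, generator = "1101011011", "10011"
crc = crc_remainder(data, generator)
print(crc)

# Receiver side: dividing data + CRC by the same generator leaves a zero remainder.
print(mod2_remainder(data + crc, generator))
```

Production implementations normally use table-driven or hardware versions of the same division rather than bit strings.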

How CRC Works

Before sending, the sender performs a binary division on the data, which produces a CRC value. This value is appended to the data packet. When the receiver gets the data, it performs the same calculation. If the calculated value is not the same as the received CRC, an error is detected.
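
The send-and-verify flow described above might look like this in Python, using the standard library's `zlib.crc32`. The framing is an assumption made for the sketch: the helper names are hypothetical, and appending the CRC as four big-endian bytes is just one possible layout, not the format of any specific protocol.

```python
import zlib


def attach_crc(payload: bytes) -> bytes:
    """Sender side: compute CRC-32 over the payload and append it as 4 bytes."""
    crc = zlib.crc32(payload)
    return payload + crc.to_bytes(4, "big")


def crc_matches(frame: bytes) -> bool:
    """Receiver side: recompute the CRC and compare it with the received one."""
    payload, received = frame[:-4], int.from_bytes(frame[-4:], "big")
    return zlib.crc32(payload) == received


frame = attach_crc(b"error detection")
print(crc_matches(frame))

# Flip one bit in the first byte to simulate corruption in transit.
corrupted = bytes([frame[0] ^ 0x01]) + frame[1:]
print(crc_matches(corrupted))
```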

Applications of CRC

CRC is extensively used in:

- Ethernet networks

- Wi-Fi communication

- Hard drives

- USB devices

- Bluetooth technology

- Digital television systems

Advantages of CRC

- Detects many types of errors

- Highly reliable

- Efficient for high-speed communication

- Effective for burst errors

Limitations of CRC

- More complex than parity checks

- Requires additional computational resources

Despite its complexity, CRC is a preferred solution in modern communication systems because of its high accuracy.

Hash Functions for Error Detection

Hashing is another key method for verifying data integrity. A hash function transforms data into a fixed-length value called a hash or digest. With a good hash function, even a tiny change in the original data produces a very different hash value.

How Hash Functions Work

When a file is created or sent, the system computes a hash value. At the other end, the receiver or the storage system computes a new hash from the data it received and compares it to the original. If the hashes are the same, the data is considered to be unchanged.
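
This comparison can be sketched with Python's standard `hashlib` module. SHA-256 is used here as one reasonable choice; the helper name and the sample messages are assumptions made for illustration.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the data as a hexadecimal string."""
    return hashlib.sha256(data).hexdigest()


sent = b"transfer 100 to account 42"
published_hash = sha256_hex(sent)   # computed when the data is created or sent

received_ok = b"transfer 100 to account 42"
received_bad = b"transfer 900 to account 42"  # a single character differs

print(sha256_hex(received_ok) == published_hash)   # hashes match
print(sha256_hex(received_bad) == published_hash)  # hashes differ
```

The digest always has the same length, 64 hexadecimal characters for SHA-256, no matter how large the input is.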

Common Hash Algorithms

Popular hash algorithms include:

- MD5

- SHA-1

- SHA-256

Applications of Hash Functions

Hash functions are commonly used in:

- File verification

- Cyber security

- Password protection

- Blockchain technology

- Software distribution

Advantages of Hash Functions

- Highly sensitive to data changes

- Strong data verification capability

- Useful for security applications

Limitations of Hash Functions

- More computationally intensive than parity checks or simple checksums

- Some legacy algorithms, such as MD5 and SHA-1, are vulnerable to collision attacks

Today’s systems tend to use stronger hashing algorithms like SHA-256, which provide greater security.

Error Detection in Data Storage Systems

Error detection extends beyond communication networks. These techniques also play an important role in storage devices. Hard drives, SSDs, memory modules, and cloud storage systems use error checking to prevent data loss.

Error Detection in RAM

Computer memory can suffer errors caused by electrical disturbances or hardware faults. Many systems use parity memory to identify these errors, or ECC (Error Correcting Code) memory to both identify and correct them.

Error Detection in Hard Drives

Storage systems use checksums and CRCs to check that files have not been corrupted over time.

Cloud Storage Reliability

Cloud providers implement extensive redundancy and verification across many servers. Without those protections, users could lose important files or end up with corrupted data.

Error Detection in Network Communication

Modern networks depend on reliable data transfer. As information passes through many devices and communication links, the risk of corruption is always present. Protocols such as those in the TCP/IP suite perform error detection during transfer to ensure accurate delivery.

Packet Verification

Data is broken up into packets that are sent over the network. Each packet carries check information such as a checksum or CRC value. If an error is detected, the receiving system can ask for retransmission.
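
As one concrete case, the IPv4, TCP, and UDP headers carry a 16-bit ones' complement checksum, described in RFC 1071. The sketch below follows that description; the function name is hypothetical and the eight sample bytes are the ones used in the RFC's own numerical example.

```python
def internet_checksum(data: bytes) -> int:
    """16-bit ones' complement checksum in the style of RFC 1071."""
    if len(data) % 2:
        data += b"\x00"  # pad odd-length input with a zero byte
    total = 0
    for i in range(0, len(data), 2):
        total += (data[i] << 8) | data[i + 1]  # add each 16-bit word
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)  # fold carries back in
    return ~total & 0xFFFF  # ones' complement of the folded sum


packet = bytes.fromhex("0001f203f4f5f6f7")
checksum = internet_checksum(packet)
print(f"{checksum:04x}")

# Receiver side: the checksum over data plus checksum field comes out as zero.
print(internet_checksum(packet + checksum.to_bytes(2, "big")))
```

A non-zero result at the receiver signals that the packet was damaged and should be discarded.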

Wireless Communication Challenges

Wireless systems face greater problems with interference because their signals travel through the air. Error detection methods enable reliable communication in spite of environmental disturbances.

Internet Reliability

Internet protocols continuously check and validate data integrity.

Error Detection versus Error Correction

Although closely related, error detection and error correction are not the same.

Error Detection

Error detection determines whether a problem exists in the data.

Error Correction

Error correction goes a step further: it both identifies the error and fixes it.

For example:

- Parity checks mainly detect errors.

- ECC memory corrects some of the errors it detects.

Some systems can get by with detection alone, when retransmission is an option. In other cases, correction is required because retransmission may not be practical.
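
To make the difference concrete, the sketch below uses a Hamming(7,4) code, which can locate and repair any single flipped bit in a 7-bit codeword. This code is chosen only because it is small enough to read; real ECC memory generally uses wider codes, and the function names here are hypothetical.

```python
def hamming74_encode(data: list[int]) -> list[int]:
    """Encode 4 data bits into a 7-bit codeword with 3 parity bits."""
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4  # covers codeword positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # covers codeword positions 2, 3, 6, 7
    p4 = d2 ^ d3 ^ d4  # covers codeword positions 4, 5, 6, 7
    return [p1, p2, d1, p4, d2, d3, d4]


def hamming74_decode(code: list[int]) -> tuple[list[int], int]:
    """Return (data bits, error position); position 0 means no error was found."""
    c = list(code)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s4 = c[3] ^ c[4] ^ c[5] ^ c[6]
    error_pos = s1 + 2 * s2 + 4 * s4  # syndrome gives the 1-based position
    if error_pos:
        c[error_pos - 1] ^= 1  # correct the flipped bit
    return [c[2], c[4], c[5], c[6]], error_pos


codeword = hamming74_encode([1, 0, 1, 1])
damaged = list(codeword)
damaged[4] ^= 1  # flip the bit at position 5

print(hamming74_decode(damaged))
```

A parity check on the same data would only report that something went wrong; here the decoder also recovers the original four bits.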

Challenges in Error Detection

Although great progress has been made in this field, error detection systems still face challenges.

Increasing Data Volumes

Modern systems handle very large volumes of information, and at that scale efficient error detection becomes a bigger challenge.

High-Speed Networks

As communication speeds increase, detection mechanisms must keep pace without sacrificing performance.

Sophisticated Data Corruption

Some complex error patterns slip past the methods used to catch them.

Security Threats

Cyber criminals may deliberately tamper with data, which calls for stronger integrity verification. Researchers are still working on better and more efficient techniques.

Future Trends in Error Detection

In the years to come, computing will make even greater use of error detection systems.

Artificial Intelligence Integration

AI tools may detect unusual patterns and predict system failures.

Quantum Computing Challenges

Quantum systems introduce a new kind of data processing that requires specialized integrity verification.

Advanced Cloud Protection

Cloud providers are introducing more robust redundancy and verification systems for large-scale user data.

Improved Cybersecurity

Future systems will integrate error detection with advanced security protocols to guard against both accidental corruption and intentional attacks. As digital systems grow more complex, accurate and reliable data handling becomes more important.

Conclusion

Error detection techniques are at the base of the reliability and stability of present-day computing systems. In activities ranging from browsing the web to storing data in the cloud, accurate data transfer and storage is a must. Without adequate measures for detecting faulty information, the result can be communication breakdowns, software failures, financial loss, and security issues.

Error detection techniques such as parity checks, checksums, cyclic redundancy checks, and hash functions play a key role in protecting data integrity. Some of these methods are very basic; others, used in modern communication networks and storage systems, are far more advanced.

As our dependence on digital technology increases, so does the need for reliable data management. As computers continue to advance, error detection will only grow in importance for its part in creating a trustworthy, accurate, and efficient digital world.
