Introduction

In today's increasingly connected world, the fundamentals of data transmission shape how information flows between devices, systems, and networks. Whether we are sending a text message, streaming a film, or banking online, data has to travel quickly, accurately, and securely. Understanding this process is a key part of studying computing, networking, or information systems.

Data transmission is the exchange of digital or analog information between two or more devices over a communication channel. Although the concept is simple in theory, the process involves several key elements, including transmission speed, data integrity, and error detection. Together, these components help ensure that data arrives at its destination quickly and intact.

This article looks at the basic principles of data transmission, including how speed is measured, what measures help maintain accuracy, and which techniques today's communication systems use to detect and correct errors.

What Is Data Transmission?

Data transmission is the transfer of data from one device (the source) to another (the destination) over a communication channel, which may be a cable, radio waves, or optical fiber. This process underlies all digital communication, from local area networks to the global internet.

There are two primary types of data transmission:

- Analog transmission: Uses continuous signals that vary smoothly to represent data.

- Digital transmission: Uses discrete signals (binary 0s and 1s) and is more common in modern systems.

Transmission can also be classified by direction:

- Simplex: One-way communication, as in radio broadcasting.

- Half-duplex: Two-way communication, but in only one direction at a time (e.g., walkie-talkies).

- Full-duplex: Simultaneous two-way exchange (e.g., phone conversations).

Understanding these basic categories provides the foundation for the more complex ideas of speed and reliability.

Transmission Speed: How Fast Data Travels

Speed is one of the most important elements of data transmission. It determines how quickly information travels from one point to another, which in turn affects user experience and system performance.

Measuring Transmission Speed

Transmission speed is measured in bits per second (bps). Larger units are also common:

- Kilobits per second (Kbps)

- Megabits per second (Mbps)

- Gigabits per second (Gbps)

For instance, a network speed of 100 Mbps means that 100 million bits of data can be sent each second.
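To make these units concrete, the sketch below converts a file size into an idealized transfer time. It assumes decimal prefixes (1 Mbps = 1,000,000 bps, 1 MB = 1,000,000 bytes), 8 bits per byte, and a link running at its full rated speed with no protocol overhead; the function name `ideal_transfer_seconds` is hypothetical.

```python
# Decimal (SI) prefixes, as commonly used for network speeds.
UNITS_BPS = {"bps": 1, "Kbps": 1_000, "Mbps": 1_000_000, "Gbps": 1_000_000_000}
BITS_PER_BYTE = 8


def ideal_transfer_seconds(size_bytes: int, speed: float, unit: str = "Mbps") -> float:
    """Time to send size_bytes at a constant rate, ignoring latency and overhead."""
    bits = size_bytes * BITS_PER_BYTE
    return bits / (speed * UNITS_BPS[unit])


# A 100 MB file (100,000,000 bytes) over a 100 Mbps link:
print(ideal_transfer_seconds(100_000_000, 100))  # 8.0
```

Real transfers usually take longer than this figure, because latency, protocol headers, and congestion all add time.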

Factors Affecting Transmission Speed

Several variables determine the speed of data transfer:

1. Bandwidth

Bandwidth is the maximum rate at which a network can transfer data. The higher the bandwidth, the more data can be sent in a given period of time.

2. Latency

Latency is the delay data experiences in transit; it is often measured as the round-trip time between sending a data packet and receiving a response. Even with high bandwidth, high latency can still slow down communication.

3. Communication Channel

The type of medium used (for example, copper cables, fiber optics, or wireless signals) affects speed. Fiber optic cables generally support the highest transfer rates.

4. Signal Interference

External factors such as electromagnetic interference can degrade signal quality and reduce speed.

5. Network Congestion

When many users share the same network, transmission speeds may drop.
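The interplay between bandwidth and latency can be illustrated with a deliberately simplified model: the time for a small request is one round trip plus the time needed to push its bits onto the link. This sketch ignores connection setup, congestion control, and queuing, and the function name `request_seconds` and the 10 KB / 50 ms figures are illustrative assumptions.

```python
def request_seconds(size_bytes: int, bandwidth_bps: float, rtt_seconds: float) -> float:
    """Simplified model: one round trip plus the time to serialize the data."""
    return rtt_seconds + (size_bytes * 8) / bandwidth_bps


small_request = 10_000  # 10 KB, e.g. a small web page resource (assumed)
rtt = 0.050             # 50 ms round-trip time (assumed)

for mbps in (10, 100, 1000):
    t = request_seconds(small_request, mbps * 1_000_000, rtt)
    print(f"{mbps:>5} Mbps: {t * 1000:.1f} ms")
```

Under these assumptions, raising bandwidth a hundredfold, from 10 Mbps to 1000 Mbps, only cuts the time from about 58 ms to about 50 ms, because the 50 ms round trip dominates for small transfers.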

Data Integrity: Ensuring Accuracy

While speed matters, the data we send must also be accurate. Data integrity, the accuracy and consistency of data as it travels through systems, is critical. If data is altered, lost, or corrupted, problems follow, and in fields such as finance and health care the consequences can be severe.

Causes of Data Errors

Data can become corrupted due to:

- Electrical interference

- Weak signals

- Hardware malfunctions

- Software bugs

- Network congestion

Because of these risks, systems need data integrity measures.

Importance of Data Integrity

Maintaining data integrity ensures that:

- Information is received exactly as it was sent

- Systems function reliably

- Users can trust the information they receive

Without appropriate safeguards, even high-speed transfer is worthless if the data arrives inaccurate.

Error Detection Methods

Error detection techniques are central to reliable communication: they let systems identify whether data has been corrupted during transfer. These methods do not correct errors themselves, but they reveal their presence, which allows corrective action to be taken.

Error detection is therefore an essential part of the fundamentals of data transmission, because it helps ensure that data arrives as accurately as required, even when it passes through very complex networks.

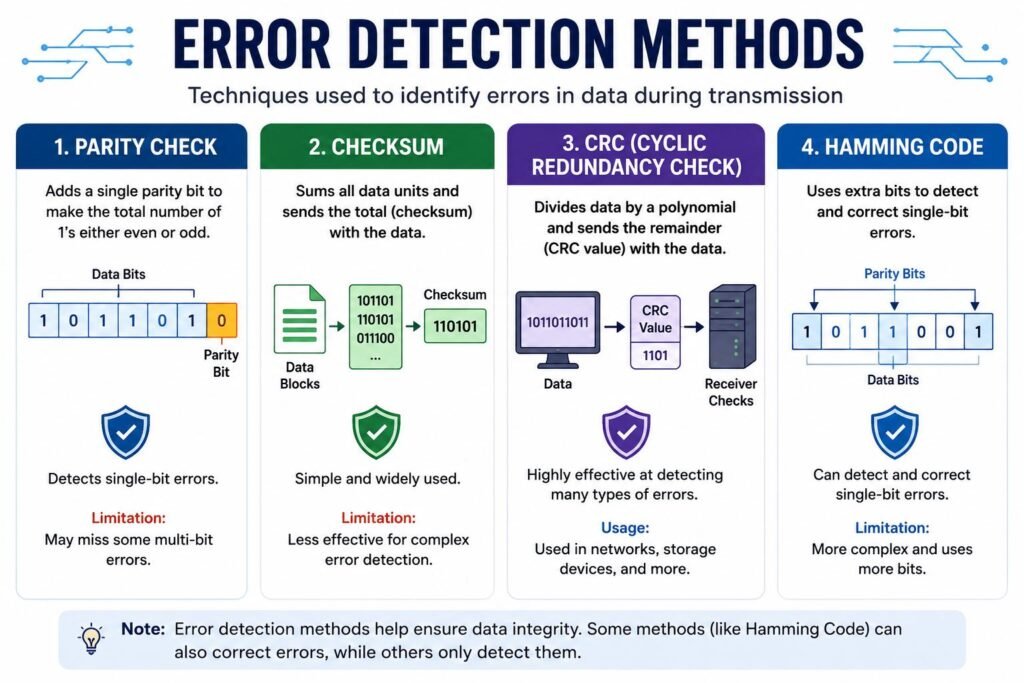

1. Parity Check

Parity checking is the simplest error detection method.

A parity bit is added to a group of bits so that the total number of 1s is even (even parity) or odd (odd parity).

The receiver checks whether the parity is still correct.

Limitation:

It detects single-bit errors (and, more generally, any odd number of flipped bits), but it cannot detect errors that flip an even number of bits, such as two-bit errors.
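A minimal even-parity sketch, assuming data is represented as a Python list of 0/1 integers (the helper names are hypothetical), shows both the check and its limitation: one flipped bit is caught, two flipped bits are not.

```python
def parity_bit(bits: list[int]) -> int:
    """Even parity: return the bit that makes the total count of 1s even."""
    return sum(bits) % 2


def add_parity(bits: list[int]) -> list[int]:
    """Sender: append the parity bit to the data bits."""
    return bits + [parity_bit(bits)]


def parity_ok(frame: list[int]) -> bool:
    """Receiver: a valid even-parity frame contains an even number of 1s."""
    return sum(frame) % 2 == 0


frame = add_parity([1, 0, 1, 1, 0, 0, 1])  # four 1s, so the parity bit is 0

one_flip = frame.copy()
one_flip[2] ^= 1       # single-bit error

two_flips = frame.copy()
two_flips[0] ^= 1      # two-bit error
two_flips[1] ^= 1

print(parity_ok(frame), parity_ok(one_flip), parity_ok(two_flips))  # True False True
```

The last result is the failure case: the two-bit error leaves the count of 1s even, so the receiver accepts corrupted data.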

2. Checksum

A checksum is computed by adding up the data elements, and the result is sent along with the data.

The receiver performs the same calculation.

If the results differ, an error has occurred.

Advantage: Simple and widely used.

Limitation: Less effective against complex errors, such as reordered data or multiple errors that cancel each other out in the sum.
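As one concrete variant, the sketch below implements the 16-bit ones' complement checksum described in RFC 1071 (the Internet checksum used by IP, TCP, and UDP). Other checksum algorithms exist; this one is chosen only as a familiar example, and the helper names are hypothetical. The final lines illustrate the limitation above: swapping two 16-bit words leaves the checksum unchanged.

```python
def internet_checksum(data: bytes) -> int:
    """Ones' complement sum of 16-bit words, then complemented (RFC 1071 style)."""
    if len(data) % 2:
        data += b"\x00"  # pad odd-length data with a zero byte
    total = 0
    for i in range(0, len(data), 2):
        total += (data[i] << 8) | data[i + 1]     # big-endian 16-bit word
        total = (total & 0xFFFF) + (total >> 16)  # fold any carry back in
    return ~total & 0xFFFF


def verify(data: bytes, checksum: int) -> bool:
    """Receiver: data plus checksum must sum to 0xFFFF, so the result is 0."""
    if len(data) % 2:
        data += b"\x00"  # apply the same padding the sender used
    return internet_checksum(data + checksum.to_bytes(2, "big")) == 0


msg = b"\x12\x34\x56\x78"
cs = internet_checksum(msg)
print(hex(cs), verify(msg, cs))                      # 0x9753 True
print(verify(b"\x12\x35\x56\x78", cs))               # False: one bit changed
print(internet_checksum(b"\x56\x78\x12\x34") == cs)  # True: reordering goes unnoticed
```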

3. CRC (Cyclic Redundancy Check)

CRC is a stronger and more reliable error detection method than parity or simple checksums.

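- The data is treated as a binary polynomial and divided, using modulo-2 arithmetic, by a predefined generator polynomial; the remainder is the CRC value.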

- The CRC value is sent with the data.

- The receiver performs the same calculation and compares the results.

Advantage: Very good at detecting many types of errors, including burst errors.

Usage: Found in network protocols (such as Ethernet) and storage devices.
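The sketch below is a bit-by-bit implementation of the widely used CRC-32 variant (the one used by Ethernet and zip files), written out to show the modulo-2 division: each XOR with the generator polynomial is a subtraction step. It is checked against Python's built-in `zlib.crc32`; the function name `crc32` is our own, and real implementations normally use lookup tables for speed.

```python
import zlib

# CRC-32 generator polynomial 0x04C11DB7, in bit-reversed form because this
# variant processes each byte least-significant bit first.
POLY = 0xEDB88320


def crc32(data: bytes) -> int:
    crc = 0xFFFFFFFF  # standard initial value for CRC-32
    for byte in data:
        crc ^= byte
        for _ in range(8):
            # Modulo-2 division step: if the low bit is set, "subtract"
            # (XOR) the generator polynomial; otherwise just shift.
            if crc & 1:
                crc = (crc >> 1) ^ POLY
            else:
                crc >>= 1
    return crc ^ 0xFFFFFFFF  # standard final XOR


frame = b"hello, world"
print(crc32(frame) == zlib.crc32(frame))      # True
print(crc32(b"hellp, world") == crc32(frame))  # False: the corruption is detected
```

The sender would append the 32-bit value to the frame, and the receiver recomputes it over the received data and compares.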

4. Hamming Code

Hamming code can not only detect errors but also correct single-bit errors.

- Extra parity bits are inserted at specific positions (the powers of two) among the data bits.

- When an error occurs, the pattern of failed parity checks pinpoints the position of the erroneous bit.

Advantage: Supports both error detection and correction.

Limitation: More complex than other methods.
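A minimal sketch of the classic Hamming(7,4) code, assuming bits are stored as Python lists of 0/1 integers (the function names are hypothetical), shows how the failed parity checks combine into the position of the bad bit.

```python
def hamming74_encode(d: list[int]) -> list[int]:
    """Encode 4 data bits into 7 bits laid out as positions 1..7:
    p1 p2 d1 p3 d2 d3 d4 (parity bits sit at the powers of two)."""
    d1, d2, d3, d4 = d
    p1 = d1 ^ d2 ^ d4  # covers positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # covers positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # covers positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(code: list[int]) -> list[int]:
    """Correct up to one flipped bit and return the 4 data bits."""
    c = list(code)
    # Recheck each parity group (list index = position - 1).
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    syndrome = s1 + 2 * s2 + 4 * s3  # position of the bad bit, or 0 if none
    if syndrome:
        c[syndrome - 1] ^= 1  # flip the erroneous bit back
    return [c[2], c[4], c[5], c[6]]


sent = hamming74_encode([1, 0, 1, 1])  # [0, 1, 1, 0, 0, 1, 1]
received = sent.copy()
received[4] ^= 1                       # corrupt position 5
print(hamming74_decode(received))      # [1, 0, 1, 1]
```

Because the syndrome directly names the faulty position, the receiver can repair the data without a retransmission; two simultaneous bit errors, however, would be miscorrected.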

Error Control Techniques

Detecting errors is only the first step; systems must also handle them effectively. That is the job of error control techniques.

Automatic Repeat Request (ARQ)

ARQ is a technique in which data is retransmitted when the receiver detects an error, typically signaled by a negative or missing acknowledgment.

Types include:

- Stop-and-Wait ARQ: The sender waits for an acknowledgment before sending the next frame.

- Go-Back-N ARQ: When an error occurs, the sender resends the erroneous frame and every frame sent after it.

- Selective Repeat ARQ: Only the frames received in error are retransmitted.
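The Stop-and-Wait scheme can be sketched as a small simulation. It assumes a channel that randomly flips one bit per frame, uses `zlib.crc32` for error detection, and, to stay short, assumes acknowledgments and the CRC itself are never lost or corrupted, so sequence numbers and timeouts are not modeled. The function name and parameters are hypothetical.

```python
import random
import zlib


def stop_and_wait(frames: list[bytes], error_rate: float = 0.3, seed: int = 1):
    """Simulate Stop-and-Wait ARQ; error_rate must be below 1 to terminate."""
    rng = random.Random(seed)
    delivered: list[bytes] = []
    transmissions = 0
    for payload in frames:
        crc = zlib.crc32(payload)  # sender attaches a CRC (assumed to arrive intact)
        while True:
            transmissions += 1
            received = bytearray(payload)
            if received and rng.random() < error_rate:
                received[0] ^= 0x01  # the channel corrupts one bit
            if zlib.crc32(bytes(received)) == crc:
                delivered.append(bytes(received))  # receiver sends ACK
                break  # sender may now send the next frame
            # CRC mismatch: receiver sends NAK, sender retransmits the same frame


    return delivered, transmissions


frames = [b"frame-0", b"frame-1", b"frame-2"]
delivered, sent = stop_and_wait(frames)
print(delivered == frames, sent >= len(frames))  # True True
```

Only one frame is ever in flight, which keeps the logic simple but leaves the link idle while the sender waits; Go-Back-N and Selective Repeat address this by allowing several unacknowledged frames at once.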

Forward Error Correction (FEC)

FEC allows the receiver to fix errors without asking for retransmission.

- The sender adds redundant information to the data before transmission.

- The receiver uses this redundancy to locate and correct errors.

Advantage: Reduces delays caused by retransmission.

Usage: Common in streaming and satellite communications.
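The simplest illustration of FEC is a repetition code: each bit is sent several times, and the receiver takes a majority vote. Practical systems use far more efficient codes, so this is only a sketch of the principle, with hypothetical function names and bits stored as lists of 0/1 integers.

```python
def encode_repetition(bits: list[int], n: int = 3) -> list[int]:
    """Repeat every bit n times (n should be odd so votes never tie)."""
    return [b for b in bits for _ in range(n)]


def decode_repetition(received: list[int], n: int = 3) -> list[int]:
    """Majority vote over each group of n received bits."""
    return [
        1 if sum(received[i:i + n]) > n // 2 else 0
        for i in range(0, len(received), n)
    ]


message = [1, 0, 1, 1]
sent = encode_repetition(message)  # [1,1,1, 0,0,0, 1,1,1, 1,1,1]
noisy = sent.copy()
noisy[1] ^= 1  # one error in the first group
noisy[4] ^= 1  # one error in the second group
print(decode_repetition(noisy) == message)  # True
```

The receiver fixes both errors without asking for anything to be resent, at the cost of tripling the amount of data transmitted.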

Transmission Modes

Data can be transmitted in different ways, depending on how the bits are sent.

Serial Transmission

- Data is sent one bit at a time over a single channel.

- Slower, but more reliable over long distances.

Parallel Transmission

- Multiple bits are sent simultaneously.

- Faster over short distances, but prone to problems such as timing skew between lines over long distances.

Communication Channels

The communication channel is the path that data takes from sender to receiver.

Wired Channels

- Twisted pair cables

- Coaxial cables

- Fiber optic cables

Advantages:

- High reliability

- Less interference (especially fiber optics)

Wireless Communications Channels

- Radio waves

- Microwaves

- Infrared signals

Advantages:

- Mobility and flexibility

Challenges:

- Signal interference

- Security concerns

Protocols in Data Transmission

Protocols are sets of rules that govern data transmission.

Examples include:

- Transmission Control Protocol (TCP): Ensures reliable, ordered delivery by detecting errors and retransmitting lost or corrupted data.

- Internet Protocol (IP): Handles addressing and routing.

- User Datagram Protocol (UDP): Faster but less reliable than TCP, since it does not retransmit lost data.

Protocols make it possible for devices from different manufacturers to communicate.

Real-World Applications

Understanding the fundamentals of data transmission has practical value, because these principles play out in everyday life:

- Internet browsing: Web pages are delivered as streams of data.

- Video streaming: Requires high throughput and error correction.

- Online banking: Requires high security and integrity.

- Cloud computing: Depends on smooth data flow between servers and users.

Challenges in Data Transmission

Despite advancements, several challenges remain:

- Noise and Interference

- Bandwidth Limitations

- Security Threats

- Latency Issues

Engineers continue to develop new technologies to improve transmission efficiency.

Future Trends

Several emerging technologies are expected to shape the future of data transmission:

- 5G and beyond

- Fiber optic expansion

- Satellite internet systems

- Quantum communication

These innovations promise greater speed, lower latency, and higher reliability.

Conclusion

The fundamentals of data transmission are the foundation on which modern communication systems are built. From how data is sent to how errors are detected and corrected, each component is crucial to the efficiency and reliability of the system.

The rate at which information is transmitted is called the transmission rate, and the assurance that the transmitted information is accurate is called data integrity. Error detection and correction techniques add protection by detecting, and in some cases correcting, errors that occur during transfer.

As technology advances, the demand for faster and more reliable data transfer keeps growing. Learning these basic principles, whether or not your background is technical, can inspire greater interest in the systems that drive our digital society and in how to improve them.
