The digital world of 2026 could hardly be more different from the siloed, hardware-bound world of the early aughts. Cloud-based networking sits at the core of this transformation, because it has freed software from its physical boundaries. In the traditional era, deploying a new application was a Herculean undertaking: teams had to acquire physical servers, configure switches statically, and allocate IP addresses by hand. Today, that complexity is encapsulated in software-defined layers, and orchestration tools let developers manage global infrastructure with a few lines of code. This shift is not just about where applications are hosted; it is a complete reshaping of how data flows, how applications scale, and how businesses stay resilient in a constantly changing digital world.

Cloud-based networking has democratized high-performance computing, giving startups access to infrastructure that rivals that of large enterprises around the world. Organizations have moved from a “Capex” (Capital Expenditure) model to an “Opex” (Operating Expenditure) model, paying only for the bandwidth and resources they actually use. This financial agility is mirrored by technical flexibility. Modern applications are no longer single programs running in a single data center; they are distributed, modular, and elastic. Below, we dive into the essential concepts that make this possible: virtualization, extreme scalability, and the orchestration of complex distributed systems.

The Foundation of Modernity: Virtualization and Software-Defined Everything

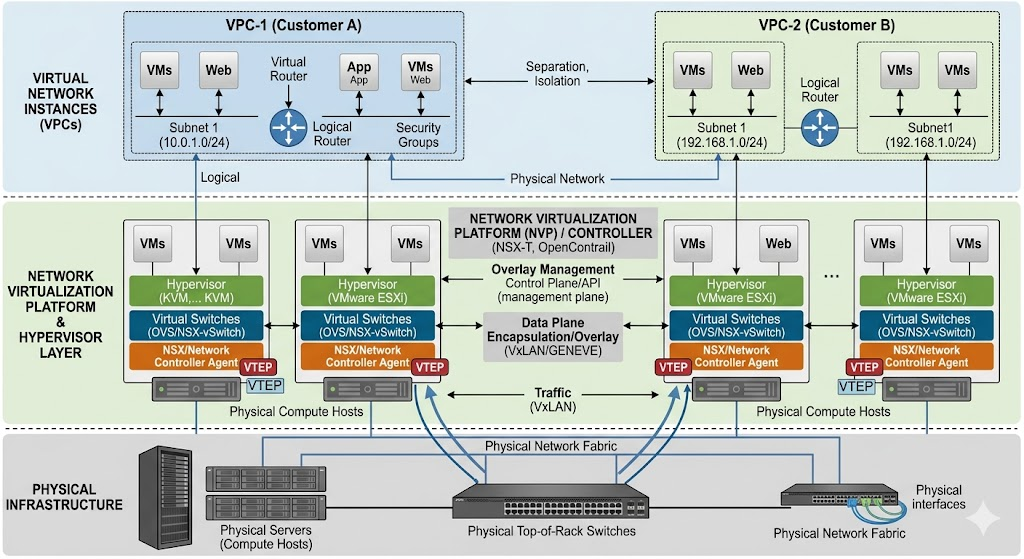

Virtualization, the idea that multiple independent virtual networks can run on a single physical infrastructure, is the basis of cloud networking. In the legacy world, a network was simply cables and hardware ports, and if you wanted to isolate a portion of your traffic for security, you often had to use physically separate hardware. Cloud networking replaces physical barriers with logical ones. Hypervisors and network virtualization platforms pool physical resources and partition them into Virtual Private Clouds (VPCs). This abstraction lets teams build complex network arrangements, including subnets, routing tables, and gateways, entirely in software, giving IT operations unprecedented agility.
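To make “subnets in software” concrete, here is a minimal sketch using Python’s standard `ipaddress` module to carve a VPC address block into subnets and look up which subnet an address belongs to. The `10.0.0.0/16` block, the subnet names, and the helper functions are hypothetical; a real cloud provider would create these subnets through its own API, which this sketch does not call.

```python
import ipaddress

# Hypothetical VPC address block; cloud providers let you choose your own CIDR range.
VPC_CIDR = ipaddress.ip_network("10.0.0.0/16")

def carve_subnets(vpc, new_prefix, names):
    """Split a VPC block into equal-sized subnets and label them in order."""
    subnets = vpc.subnets(new_prefix=new_prefix)  # generator of child networks
    return {name: next(subnets) for name in names}

def subnet_for(ip, plan):
    """Return the name of the subnet containing an address, or None if none does."""
    addr = ipaddress.ip_address(ip)
    for name, net in plan.items():
        if addr in net:
            return name
    return None

plan = carve_subnets(VPC_CIDR, 24, ["public-a", "private-a", "database-a"])
for name, net in plan.items():
    print(f"{name:11} {net}  ({net.num_addresses} addresses)")
print(subnet_for("10.0.1.37", plan))  # private-a
```

Nothing here touches a cable or a switch port: the “network layout” is just arithmetic on address ranges, which is exactly what makes it easy to create, change, and tear down.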

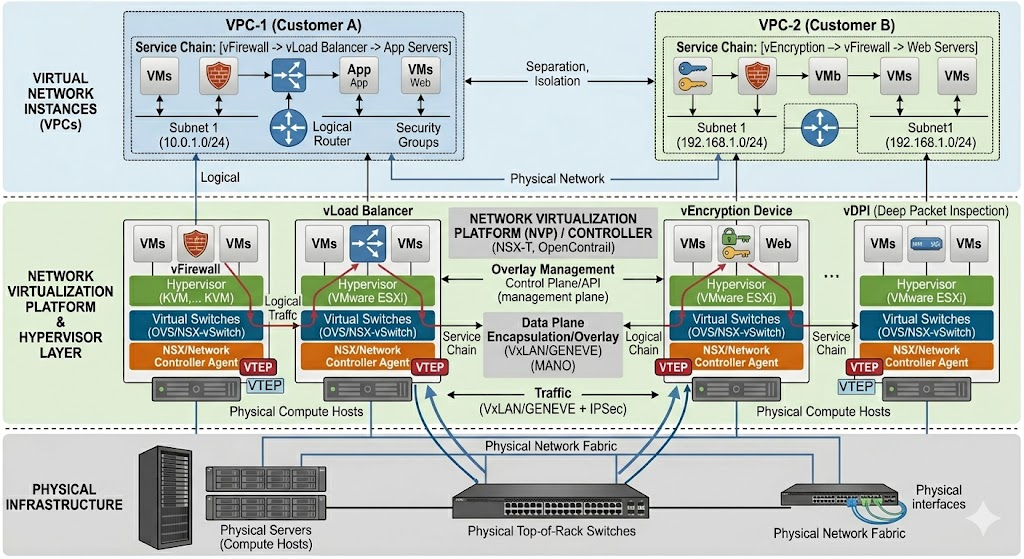

Beyond resource partitioning, virtualization also introduced “Network Function Virtualization” (NFV). Networking appliances such as firewalls, load balancers, and encryption devices have traditionally been expensive, dedicated hardware. In the cloud, these functions run as virtualized services that can be created and destroyed in moments. A developer can therefore add a load balancer to the system during a busy period and remove it when traffic dies down. This “software-defined” approach removes the delays of provisioning hardware and lets the network move as fast as the application code.
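As a rough illustration of a network function that exists only as software, the sketch below models a round-robin load balancer that is created when traffic exceeds a threshold and simply not created (and so discarded) when traffic falls. The class, the `balancer_for` helper, the 1,000 requests-per-second threshold, and the backend addresses are all hypothetical; managed cloud load balancers are provisioned through provider APIs, not like this.

```python
import itertools

class SoftwareLoadBalancer:
    """A toy round-robin load balancer: a network function that exists only as software."""

    def __init__(self, backends):
        if not backends:
            raise ValueError("at least one backend is required")
        self.backends = list(backends)
        self._cycle = itertools.cycle(self.backends)

    def route(self):
        """Return the next backend in round-robin order."""
        return next(self._cycle)

def balancer_for(requests_per_second, backends, threshold=1000):
    """Create a balancer only while traffic exceeds a (hypothetical) threshold.
    Returning None models tearing the balancer down once traffic dies down."""
    if requests_per_second <= threshold:
        return None
    return SoftwareLoadBalancer(backends)

lb = balancer_for(5000, ["10.0.1.10", "10.0.1.11", "10.0.1.12"])
print([lb.route() for _ in range(4)])
# ['10.0.1.10', '10.0.1.11', '10.0.1.12', '10.0.1.10']
print(balancer_for(200, ["10.0.1.10"]))  # None
```

Round-robin is chosen only because it is the simplest balancing policy to read; real balancers typically also weigh health checks and current connection counts.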

Virtualization also provides hardware independence, an essential property for disaster recovery and business continuity. Because the entire network design is defined as data (Infrastructure as Code), it can be duplicated in another region in minutes. If a physical data center in North America suffers an outage, the virtualized network and its applications can be re-instantiated in a region in Europe or Asia with minimal downtime. This level of resilience comes directly from separating the logical network from the physical wire. For modern businesses, the network is no longer a static tool but a system that can heal, grow, and evolve dynamically along with the application.
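Because the network definition is just data, “re-instantiating” it elsewhere amounts to copying the definition and changing its target region. The sketch below assumes a hypothetical, provider-neutral `NetworkSpec` schema and made-up region names; tools such as Terraform or CloudFormation express the same idea in their own formats, and an actual failover would hand the copied definition to such a tool.

```python
import copy
from dataclasses import dataclass, field

@dataclass
class NetworkSpec:
    """Hypothetical, provider-neutral description of a network, kept as plain data."""
    region: str
    vpc_cidr: str
    subnets: dict = field(default_factory=dict)  # subnet name -> CIDR
    routes: list = field(default_factory=list)   # (destination, target) pairs

def replicate(spec, target_region):
    """Clone a network definition into another region without mutating the original."""
    clone = copy.deepcopy(spec)
    clone.region = target_region
    return clone

primary = NetworkSpec(
    region="us-east",
    vpc_cidr="10.0.0.0/16",
    subnets={"public": "10.0.0.0/24", "private": "10.0.1.0/24"},
    routes=[("0.0.0.0/0", "internet-gateway")],
)
standby = replicate(primary, "eu-west")
print(standby.region, standby.subnets)
```

The deep copy matters: the standby definition can later diverge (for example, with region-specific routes) without silently changing the primary.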

Scalability and Elasticity: Fulfilling the Needs of a Global Audience

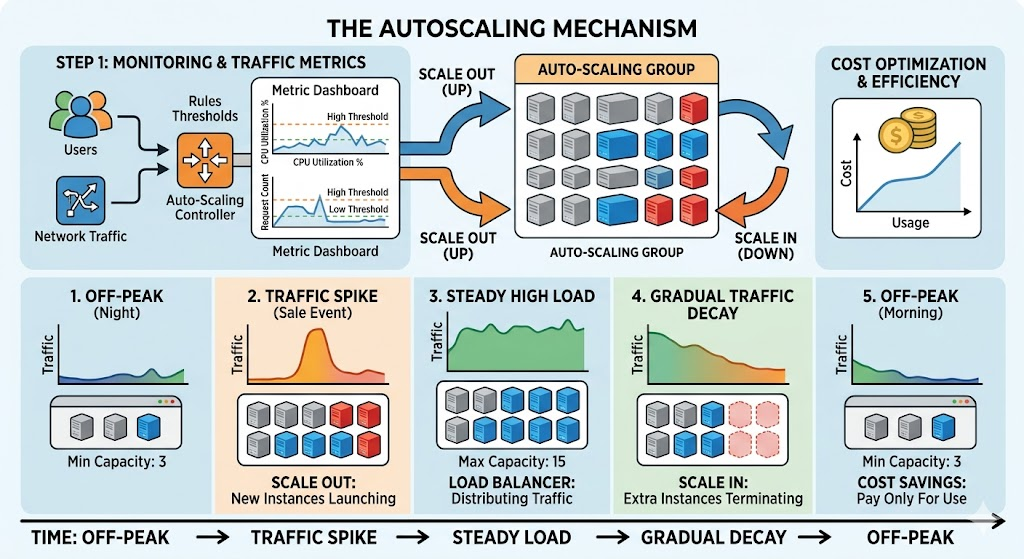

If virtualization is the foundation, scalability is the skyscraper built on it. One of cloud networking’s biggest effects on today’s applications is its ability to handle unpredictable workloads. Historically, engineers had to “over-provision” their networks, buying extra hardware to handle peak traffic that might occur only once a year, such as Black Friday at a retailer. As a result, large amounts of expensive hardware sat idle 95% of the time. Cloud networking introduces “elasticity”: the system automatically adjusts the resources it uses according to how many users are active at a given moment.
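A little arithmetic shows why over-provisioning is so wasteful. The sketch compares capacity fixed at the peak with idealized elastic capacity that tracks demand exactly. The demand figures are invented for illustration, and real autoscaling lags demand and keeps some headroom, so real elastic utilization would be somewhat below 100%.

```python
# Hypothetical demand, in servers needed, sampled once per day over a week
# that ends with a one-day sales spike.
demand = [10, 12, 11, 10, 13, 12, 100]

def utilization(demand, capacity_per_period):
    """Fraction of provisioned server-periods that actually served traffic."""
    return sum(demand) / sum(capacity_per_period)

fixed = [max(demand)] * len(demand)  # sized for the peak, every single day
elastic = list(demand)               # capacity follows demand exactly (idealized)

print(f"fixed:   {utilization(demand, fixed):.0%}")    # fixed:   24%
print(f"elastic: {utilization(demand, elastic):.0%}")  # elastic: 100%
```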

This scalability is typically implemented with load-balancing algorithms and auto-scaling groups. When demand suddenly spikes, the platform detects the rising latency or CPU consumption, launches additional application instances, and automatically routes traffic to them. The process is seamless to the end user: access is the same for ten users as for a hundred million. This ability has revolutionized application design, moving it from “application-centric” development toward a “cloud-native” perspective, in which an application can be deployed to any number of nodes while still maintaining state and consistency.
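The scaling decision itself can be surprisingly simple. The sketch below uses the proportional rule documented for Kubernetes’ Horizontal Pod Autoscaler, desired = ceil(current × metric / target); cloud auto-scaling groups offer similar “target tracking” policies. The function name, the default bounds, and the example numbers are assumptions, and real autoscalers add cooldowns and tolerances that this sketch omits.

```python
import math

def desired_replicas(current, metric, target, min_replicas=2, max_replicas=50):
    """Target-tracking scale decision: resize so the per-instance metric
    (e.g. average CPU %) moves back toward the target, within fixed bounds."""
    if current <= 0 or target <= 0:
        raise ValueError("current replicas and target must be positive")
    wanted = math.ceil(current * metric / target)
    return max(min_replicas, min(max_replicas, wanted))

print(desired_replicas(4, metric=90, target=60))   # 6: scale out under load
print(desired_replicas(6, metric=20, target=60))   # 2: scale in when quiet
print(desired_replicas(40, metric=95, target=50))  # 50: capped at max_replicas
```

Once new instances exist, the load balancer’s backend pool is updated so traffic starts reaching them; that registration step is provider-specific and not shown here.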

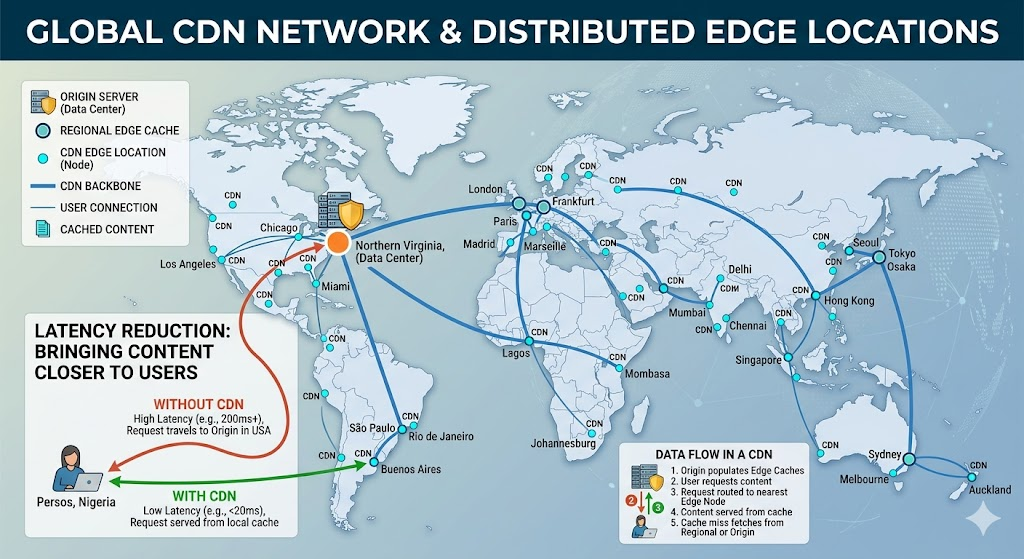

But scalability in cloud networking is not simply about adding compute resources; it is also about optimizing data delivery around the world. Content Delivery Networks (CDNs) and edge computing extend the cloud network closer to the user. Cloud networks cache content at the “edge,” in locations physically close to end users, which reduces latency. This is essential for real-time applications such as high-frequency trading platforms, online gaming, and autonomous vehicles, where milliseconds matter. Scaling out to the edge of the internet may be the most impactful part of the modern cloud.
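Physics is why proximity matters: light in optical fiber covers roughly 200 km per millisecond, so distance sets a hard floor on round-trip time. The sketch picks the geographically nearest edge location with the haversine formula and estimates that best-case round trip. The edge locations are hypothetical, and real CDNs steer users with DNS or anycast routing and measured latency rather than pure geometry.

```python
import math

# Hypothetical edge locations: (latitude, longitude) in degrees.
EDGES = {
    "frankfurt": (50.11, 8.68),
    "singapore": (1.35, 103.82),
    "virginia": (38.95, -77.45),
}

EARTH_RADIUS_KM = 6371.0
FIBER_KM_PER_MS = 200.0  # approximate speed of light in optical fiber

def great_circle_km(a, b):
    """Haversine distance between two (lat, lon) points, in kilometres."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))

def nearest_edge(user):
    """Pick the closest edge and estimate its best-case round-trip propagation delay (ms)."""
    name = min(EDGES, key=lambda e: great_circle_km(user, EDGES[e]))
    km = great_circle_km(user, EDGES[name])
    return name, 2 * km / FIBER_KM_PER_MS

name, rtt = nearest_edge((48.86, 2.35))  # a user in Paris
print(name, f"~{rtt:.1f} ms round trip")  # frankfurt, a few milliseconds
```

Real latency is higher than this floor because fiber paths are not great circles and every router hop adds delay, which only strengthens the case for serving content nearby.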

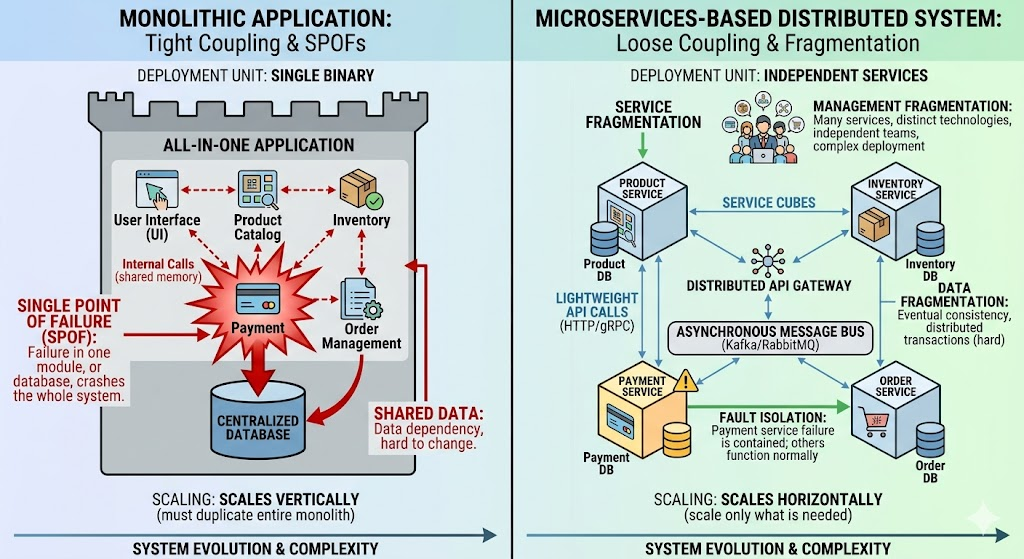

The Death of the Monolith – Distributed Systems

With the advent of cloud networking, it is time to say goodbye to monolithic application architecture. In a monolith, everything, including the database, user interface, and business logic, is packaged into one program running on one server. This creates a “single point of failure”: if one piece fails, the whole system fails. Cloud networking has helped drive the shift to microservices and distributed systems, which break the application into smaller, independent services that communicate over the network. This design improves reliability, because the failure of one service (such as a “recommendations” engine) does not necessarily make the whole platform unusable.
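The reliability benefit depends on the caller treating non-critical services as optional. In this minimal sketch, both services are hypothetical local functions standing in for network calls, and the recommendations service is simulated as being down; the product page still renders, just without recommendations.

```python
def fetch_product(product_id):
    """Hypothetical core service: required to render the page."""
    return {"id": product_id, "name": f"Product {product_id}"}

def fetch_recommendations(product_id):
    """Hypothetical non-critical service, simulated here as unavailable."""
    raise ConnectionError("recommendations service unavailable")

def render_product_page(product_id):
    """Compose a page from independent services, tolerating failure of optional ones."""
    page = {"product": fetch_product(product_id)}  # a failure here still propagates
    try:
        page["recommendations"] = fetch_recommendations(product_id)
    except ConnectionError:
        page["recommendations"] = []  # degrade gracefully instead of failing the whole page
    return page

print(render_product_page(42))
# {'product': {'id': 42, 'name': 'Product 42'}, 'recommendations': []}
```

In a real deployment these would be network calls with timeouts, since a slow dependency can hurt availability as much as a dead one.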

These distributed systems need a highly intelligent network layer to manage them. Because services are spread across many virtual machines and containers, and sometimes across different cloud providers, the network must support service discovery, traffic routing, and security between the various parts. This need gave rise to “service meshes”: dedicated infrastructure layers that take responsibility for service-to-service communication. A service mesh gives engineers deep visibility into the data flowing between microservices, helping them identify bottlenecks, and it can secure that traffic with automatic encryption. In this setting, the network is the “glue” that holds the distributed application together and makes hundreds of moving parts appear as one cohesive application.
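Real meshes such as Istio and Linkerd run a separate proxy next to each service and add mutual TLS encryption. The toy, in-process `Sidecar` class below (a hypothetical name) illustrates only two of their duties: retrying transient failures and recording per-destination telemetry. It does no encryption, and the flaky `inventory` service is simulated.

```python
import time
from collections import defaultdict

class Sidecar:
    """Toy stand-in for a service-mesh proxy: wraps outbound calls,
    retries transient failures, and records per-destination telemetry."""

    def __init__(self, max_attempts=3):
        if max_attempts < 1:
            raise ValueError("max_attempts must be at least 1")
        self.max_attempts = max_attempts
        self.stats = defaultdict(lambda: {"calls": 0, "failures": 0, "latency_s": []})

    def call(self, destination, func, *args):
        last_error = None
        for _ in range(self.max_attempts):
            entry = self.stats[destination]
            entry["calls"] += 1
            start = time.perf_counter()
            try:
                return func(*args)
            except ConnectionError as err:  # treat connection errors as transient
                entry["failures"] += 1
                last_error = err
            finally:
                entry["latency_s"].append(time.perf_counter() - start)
        raise last_error

attempts = {"n": 0}

def flaky_inventory(sku):
    """Simulated service that fails on its first call only."""
    attempts["n"] += 1
    if attempts["n"] == 1:
        raise ConnectionError("transient network blip")
    return {"sku": sku, "in_stock": True}

mesh = Sidecar()
result = mesh.call("inventory", flaky_inventory, "A-100")
print(result)  # {'sku': 'A-100', 'in_stock': True}
print(mesh.stats["inventory"]["calls"], mesh.stats["inventory"]["failures"])  # 2 1
```

Because every call passes through the proxy, the telemetry comes for free. That is the source of the visibility into data flow described above.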

Distributed systems also have significant consequences for data access. Traditional databases tended to be centralized, which was a problem for global applications. Distributed databases, supported by cloud networking, replicate data across multiple regions, so a user in Tokyo and another in London can both access the same data with minimal delay. This raises the problem of “data consistency”, which means ensuring that all nodes agree on the latest version of the truth. Modern replication and consensus protocols (such as Paxos and Raft) are designed to handle this complexity. The result is a data fabric that lets data be accessed, processed, and synchronized across the globe at a scale that was unachievable 20 years ago.
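One classic technique for consistency across replicas is quorum replication, popularized by Amazon’s Dynamo design. With N replicas, a write waits for W acknowledgements and a read consults R replicas. If R + W > N, every read set overlaps the latest write set. The sketch below is a simplified, single-process illustration with hypothetical region names. It is not how any particular database is implemented, and it uses a single global version counter where real systems use per-key versions or vector clocks.

```python
from dataclasses import dataclass

@dataclass
class Replica:
    region: str
    version: int = 0
    value: object = None

class QuorumStore:
    """Quorum replication sketch: writes reach W replicas, reads consult R replicas."""

    def __init__(self, regions, w, r):
        if w + r <= len(regions):
            raise ValueError("need R + W > N for reads to see the latest write")
        self.replicas = [Replica(region) for region in regions]
        self.w, self.r = w, r
        self.version = 0  # simplification: one coordinator hands out versions

    def write(self, value):
        self.version += 1
        # Simulate only the first W replicas acknowledging; the rest lag behind.
        for replica in self.replicas[: self.w]:
            replica.version, replica.value = self.version, value

    def read(self):
        # Deliberately read from the *last* R replicas to show the overlap still works.
        sample = self.replicas[-self.r:]
        return max(sample, key=lambda rep: rep.version).value

store = QuorumStore(["tokyo", "london", "virginia"], w=2, r=2)
store.write("price=100")
store.write("price=120")
print(store.read())  # price=120, even though "virginia" never received the update
```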

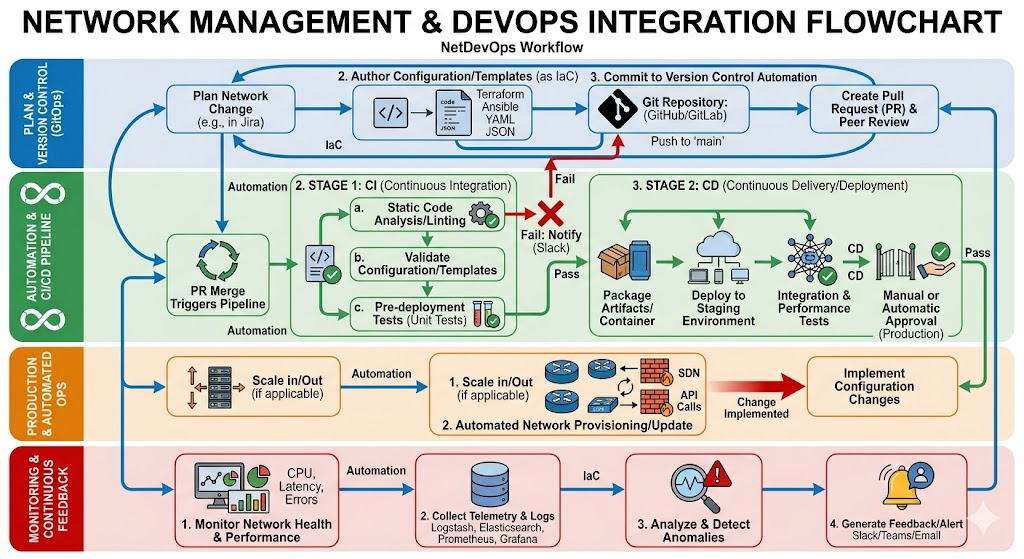

The Evolution of IT Operations and the Emergence of NetDevOps

Cloud networking has transformed not only the “what” of technology but also the “how” of IT work. One result is the emergence of a discipline called NetDevOps, which applies DevOps practices such as version control, automation, and continuous integration to network management. In today’s IT world, network engineers need to trade their serial console cables for YAML files and Python scripts. The network becomes code that can be deployed along with entire environments, minimizing human error and shortening the “time-to-market” for new features and applications.
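At the heart of most NetDevOps workflows is a comparison between the desired state kept in version control and the state that is actually running. CI can review that diff before anything changes. The sketch below computes such a plan for a hypothetical segment-to-VLAN configuration held in plain dictionaries. In practice the desired state might live in a YAML file in Git, and tools like Terraform or Ansible perform this comparison with far more nuance.

```python
def plan_changes(current, desired):
    """Compare running config with the version-controlled desired state and
    list the actions needed to converge, similar in spirit to a CI 'plan' step."""
    actions = []
    for name in sorted(desired.keys() - current.keys()):
        actions.append(("create", name, desired[name]))
    for name in sorted(current.keys() - desired.keys()):
        actions.append(("delete", name, current[name]))
    for name in sorted(current.keys() & desired.keys()):
        if current[name] != desired[name]:
            actions.append(("update", name, desired[name]))
    return actions

# Hypothetical configuration: segment name -> settings.
current = {"web": {"vlan": 10}, "db": {"vlan": 20}, "legacy": {"vlan": 99}}
desired = {"web": {"vlan": 10}, "db": {"vlan": 30}, "monitoring": {"vlan": 40}}

for action in plan_changes(current, desired):
    print(action)
# ('create', 'monitoring', {'vlan': 40})
# ('delete', 'legacy', {'vlan': 99})
# ('update', 'db', {'vlan': 30})
```

An empty plan means the network already matches what is in the repository, which is a cheap and useful check to run on every commit.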

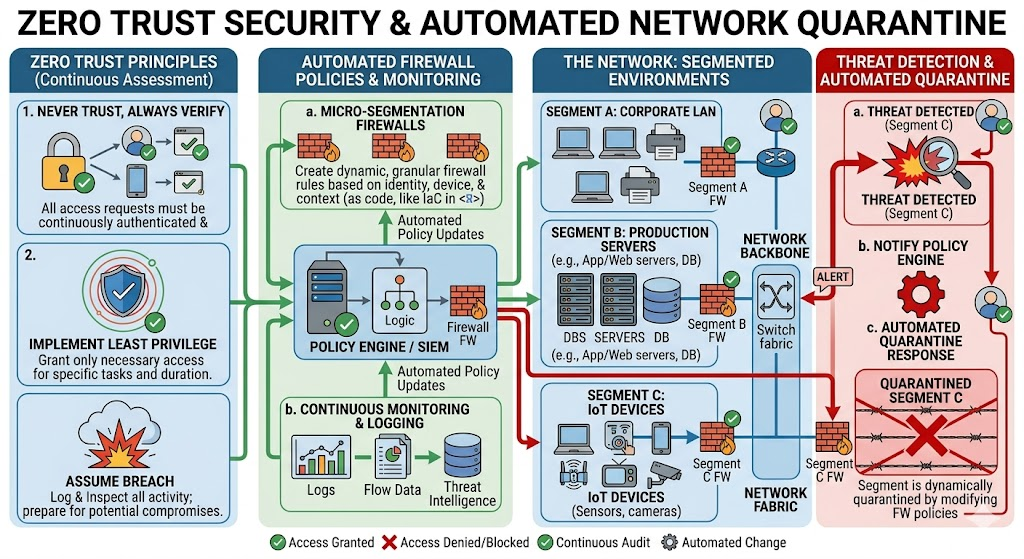

This automation is crucial for security in today’s threat-filled digital landscape. In a traditional network, it might take weeks to discover that a single firewall rule is misconfigured. In a cloud-defined network, security policies are embedded in the infrastructure itself, and automated tools can scan network configurations for vulnerabilities before they are deployed. If a breach is detected, the cloud network can automatically “quarantine” the compromised segment, preventing attackers from moving laterally. In 2026, with attacks arriving faster and in greater volume than human operators can handle, this proactive, automated security strategy is essential.
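A pre-deployment scan can be as simple as flagging inbound rules that expose sensitive ports to the entire internet. The sketch assumes a hypothetical rule format (`id`, `cidr`, `from_port`, `to_port`) and an illustrative, non-exhaustive port list. A CI job could fail the build whenever the list of findings is non-empty.

```python
import ipaddress

SENSITIVE_PORTS = {22: "SSH", 3389: "RDP", 5432: "PostgreSQL"}  # illustrative, not exhaustive

def find_risky_rules(rules):
    """Flag inbound rules that open a sensitive port to the whole internet.
    Each rule is a hypothetical dict: {"id", "cidr", "from_port", "to_port"}."""
    findings = []
    for rule in rules:
        net = ipaddress.ip_network(rule["cidr"])
        if net.prefixlen != 0:
            continue  # only world-open ranges (0.0.0.0/0 or ::/0) are checked here
        for port, service in SENSITIVE_PORTS.items():
            if rule["from_port"] <= port <= rule["to_port"]:
                findings.append(f'{rule["id"]}: {service} (port {port}) open to {rule["cidr"]}')
    return findings

rules = [
    {"id": "allow-https", "cidr": "0.0.0.0/0", "from_port": 443, "to_port": 443},
    {"id": "allow-ssh", "cidr": "0.0.0.0/0", "from_port": 22, "to_port": 22},
    {"id": "allow-db-internal", "cidr": "10.0.1.0/24", "from_port": 5432, "to_port": 5432},
]
for finding in find_risky_rules(rules):
    print(finding)  # allow-ssh: SSH (port 22) open to 0.0.0.0/0
```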

Cloud networking tools have also revolutionized how teams troubleshoot and optimize performance. Real-time telemetry gives operations teams a clear view of traffic flow across the system, letting them spot “hot spots” or failing components before they affect the user experience. This “observability” is very different from old-school reactive monitoring. In today’s IT landscape, AI-powered analytics can anticipate network issues and reroute traffic as needed, keeping applications “always-on.” The IT profession has undergone a major paradigm shift, from “keeping the lights on” to orchestrating a symphony of complex, automated systems.
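Production analytics are far more sophisticated than this, but the core idea of comparing live telemetry against a recent baseline can be sketched with a rolling z-score. This is a simple statistical stand-in, not a machine-learning model. The window size, threshold, and latency samples are hypothetical.

```python
import statistics

def latency_hot_spots(samples_ms, window=5, threshold=3.0):
    """Flag samples more than `threshold` standard deviations above the mean
    of the preceding `window` samples (a simple rolling z-score)."""
    alerts = []
    for i in range(window, len(samples_ms)):
        history = samples_ms[i - window:i]
        mean = statistics.mean(history)
        stdev = statistics.pstdev(history) or 1e-9  # avoid dividing by zero on a flat history
        if (samples_ms[i] - mean) / stdev > threshold:
            alerts.append((i, samples_ms[i]))
    return alerts

latency = [20, 21, 19, 20, 22, 21, 20, 95, 21, 20]  # one sudden spike
print(latency_hot_spots(latency))  # [(7, 95)]
```

Note that after the spike it briefly inflates the baseline, which is one reason real systems prefer more robust statistics or learned models.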

Challenges & Considerations in a Cloud-First World

The move to cloud-based networking is not without challenges. The biggest is “vendor lock-in”: if an organization relies entirely on one cloud provider’s proprietary tools, switching providers becomes a huge and expensive effort. This has driven a push toward “multi-cloud” and “hybrid-cloud” strategies, in which organizations combine multiple cloud providers and on-premises data centers to retain leverage and flexibility. Managing a network across multiple environments adds complexity, however, and the tools that do it need to offer a “single pane of glass” view across all of them.
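One common way to limit lock-in is to keep provider-specific calls behind a thin interface of your own. Switching or adding a provider then means writing a new adapter rather than rewriting every caller. In the sketch below, every class is hypothetical and no vendor SDK is called. The adapters return canned data where real ones would query each environment.

```python
from dataclasses import dataclass
from typing import List, Protocol

@dataclass
class Network:
    provider: str
    name: str
    cidr: str

class NetworkProvider(Protocol):
    """The single interface the rest of the code depends on."""
    def list_networks(self) -> List[Network]: ...

class CloudAClient:
    """Hypothetical adapter; a real one would wrap a vendor SDK."""
    def list_networks(self) -> List[Network]:
        return [Network("cloud-a", "prod-vpc", "10.0.0.0/16")]

class OnPremClient:
    """Hypothetical adapter for an on-premises data center."""
    def list_networks(self) -> List[Network]:
        return [Network("on-prem", "dc1-core", "172.16.0.0/12")]

def single_pane_of_glass(providers: List[NetworkProvider]) -> List[Network]:
    """Merge the network inventories of every environment into one view."""
    return [net for provider in providers for net in provider.list_networks()]

for net in single_pane_of_glass([CloudAClient(), OnPremClient()]):
    print(f"{net.provider:8} {net.name:10} {net.cidr}")
```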

Security is also a moving target. Cloud providers offer very strong security options, but under the Shared Responsibility Model, the customer is responsible for configuring its own virtual network. An exposed S3 bucket or an oversight in a security group setting can cause catastrophic data leakage. The more distributed an application becomes, the larger its “attack surface” and the more entry points attackers have. This calls for a “Zero Trust” architecture, in which every data access request is fully verified and encrypted, whether it originates inside or outside the network. The network is no longer a “perimeter” to be defended; it is a web of interactions that must be constantly monitored.
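The “verify every request” half of Zero Trust can be sketched with a per-request signature check followed by an explicit-allow policy lookup. It applies no matter where the request came from. This sketch uses Python’s standard `hmac` module. The service keys, policy entries, and message format are hypothetical, and the sketch omits encryption and replay protection. In practice, mutual TLS or signed tokens usually carry the identity, and keys come from a secrets manager.

```python
import hashlib
import hmac

# Hypothetical per-service secrets (in practice, fetched from a secrets manager).
SERVICE_KEYS = {"orders": b"orders-secret", "billing": b"billing-secret"}

# Hypothetical least-privilege policy: explicitly allowed (caller, action, resource) triples.
POLICY = {("orders", "read", "customer-db"), ("billing", "write", "invoices")}

def _message(service, action, resource):
    return f"{service}|{action}|{resource}".encode()

def sign(service, action, resource):
    """What a calling service attaches to each request (toy HMAC-SHA256 signature)."""
    return hmac.new(SERVICE_KEYS[service], _message(service, action, resource), hashlib.sha256).hexdigest()

def authorize(service, action, resource, signature):
    """Check identity and policy on every request, whether it came from inside or outside."""
    key = SERVICE_KEYS.get(service)
    if key is None:
        return False  # unknown caller
    expected = hmac.new(key, _message(service, action, resource), hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, signature):
        return False  # identity not proven
    return (service, action, resource) in POLICY  # explicit allow only

print(authorize("orders", "read", "customer-db", sign("orders", "read", "customer-db")))    # True
print(authorize("orders", "write", "customer-db", sign("orders", "write", "customer-db")))  # False
print(authorize("orders", "read", "customer-db", "forged"))                                 # False
```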

Last but not least, cloud networking is not necessarily cheaper. The pay-as-you-go model is efficient, but costs can add up quickly through “data egress” charges, which are incurred when data leaves the cloud provider’s network. To stay cost-effective, organizations need robust “FinOps” (Financial Operations) practices to track cloud spending and fine-tune their network designs. This often means balancing trade-offs among performance, redundancy, and budget. Today’s network architect needs to be as much a financial strategist as a technical expert, reconciling the limitless potential of the cloud with a company’s finite budget.
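Egress pricing is usually tiered, so estimating a monthly bill is a piecewise calculation. The sketch works in integer cents to avoid floating-point rounding. The tier boundaries and rates are invented for illustration and are not any provider’s real prices, which you would take from the provider’s own price list.

```python
# Hypothetical tiered egress pricing, in US cents per GB (NOT real provider rates).
TIERS = [
    (100, 0),     # first 100 GB free
    (10_000, 9),  # up to 10,000 GB total at 9 cents/GB
    (None, 5),    # everything beyond that at 5 cents/GB
]

def egress_cost_cents(gb):
    """Charge each slice of monthly egress at its own tier's rate."""
    if gb < 0:
        raise ValueError("egress volume cannot be negative")
    cost, lower = 0, 0
    for upper, rate in TIERS:
        if upper is None or gb <= upper:
            return cost + (gb - lower) * rate
        cost += (upper - lower) * rate
        lower = upper
    return cost

for gb in (50, 500, 20_000):
    print(f"{gb:>6} GB -> ${egress_cost_cents(gb) / 100:,.2f}")
#     50 GB -> $0.00
#    500 GB -> $36.00
#  20000 GB -> $1,391.00
```

Even with made-up rates, the shape of the result is instructive: a design that moves data between regions or out to users at high volume can cost far more than its compute.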

Conclusion: The Connected Enterprise’s Future

As we head toward the end of this decade, cloud networking is likely to keep moving in the same direction: more abstraction, more intelligence, and complete integration. The network is becoming almost entirely invisible to developers, and this is how everything will become “serverless”: connectivity, security, and scaling will be managed by the network itself. The combination of 5G, artificial intelligence, and cloud infrastructure will enable a future in which data is available everywhere, 24/7, supporting the next generation of augmented reality, autonomous systems, and global collaboration tools. The network has effectively become the computer; it is the connective tissue of a digital world that never sleeps.

In today’s business landscape, the message is clear: change or perish. Moving to a cloud-based network is no longer a luxury for the technologically advanced; it is a requirement for survival. By combining virtualization, distributed systems, and elastic scaling, organizations can build applications that are faster, more reliable, and more resilient to an uncertain future. Application deployment has been transformed, and we are living through an unprecedented era of digital innovation, driven by the invisible, powerful, and near-limitlessly scalable networks of the cloud. The infrastructure is in place; all that is needed is our imagination.