Abstract

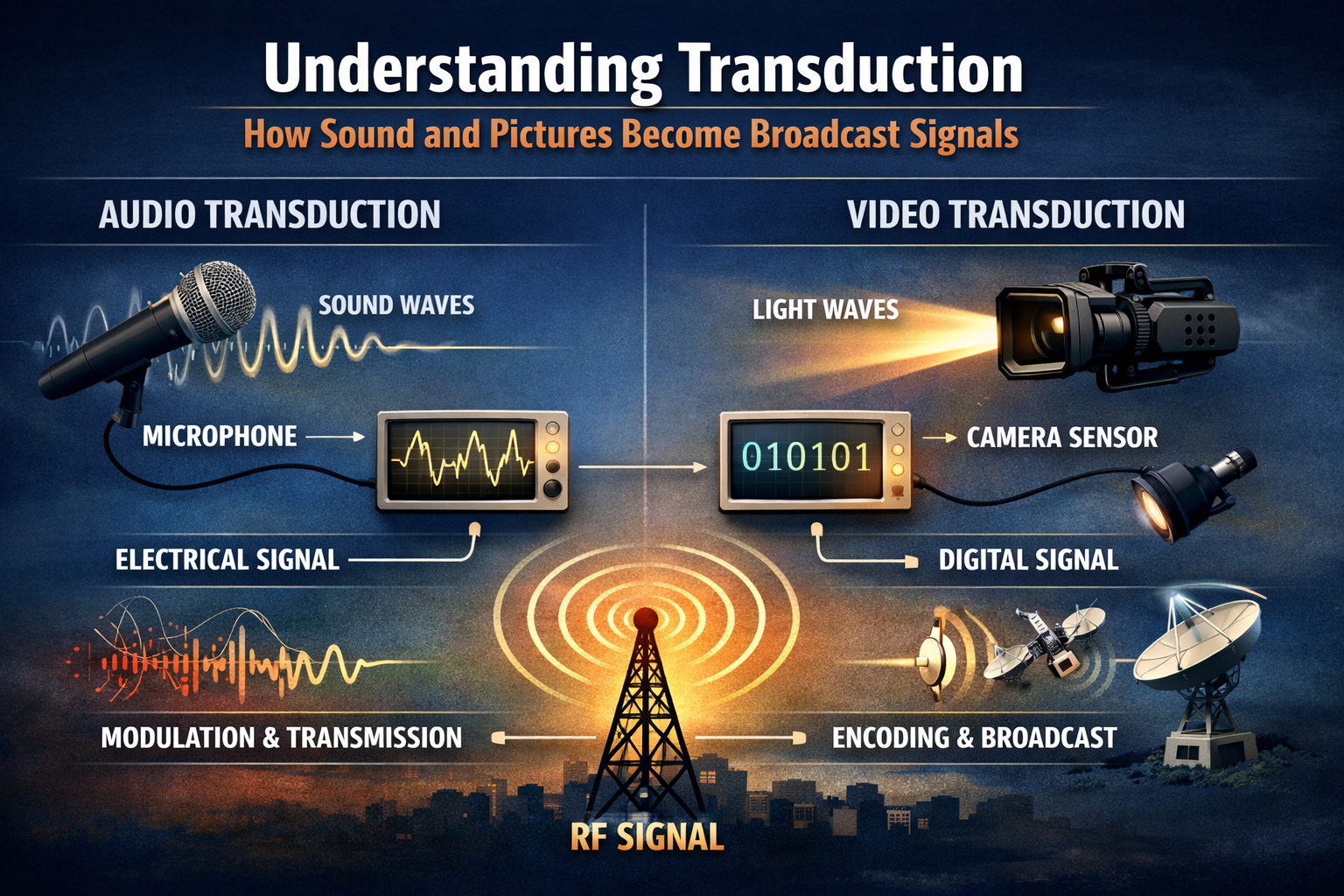

Transduction is one of the fundamental scientific processes that makes it possible to capture, convert, and broadcast sound and visual media. This paper discusses how physical stimuli are converted into electrical signals by devices such as microphones, cameras, and other sensors. Drawing on the physics, engineering, and communications literature, it combines conceptual frameworks and documented empirical findings to describe energy conversion, signal conditioning, and broadcast transmission. It presents the main theories underlying transducers, explains how signals are captured and processed, and considers the implications for broadcast quality and system design. The findings highlight the practical problems of noise, latency, and bandwidth constraints. The work seeks to bridge the gap between an introductory physics course and broadcasting applications by presenting transduction clearly for communication students.

Keywords: scientific transduction, signal conversion, broadcast technology, microphone transducers, camera sensors, electromagnetic transmission.

1.0 Introduction

Contemporary broadcasting requires the smooth conversion of physical phenomena, namely sound and light, into electrical signals that can be transported by various means, received, and decoded for viewers. The central concept in this process is transduction, which spans physics, electrical engineering, and media technology (Smith, 2018). Transduction is poorly understood in communication education, which usually prioritizes content production over signal science.

This article gives a technical but accessible overview of how transducers operate in broadcast systems. It begins with a literature review of the underlying science and earlier technological studies. It then provides a conceptual and theoretical overview of transduction mechanisms. After the methodology section, the findings and discussion relate theory to practice.

2.0 Literature Review

The study of transduction cuts across several fields. In acoustics, pressure-to-voltage conversion has been studied extensively, showing how different microphone designs influence signal fidelity (Rossing & Fletcher, 2004). In optics and imaging, semiconductor sensor technologies have been developed to convert light into electrical charge efficiently (Janesick, 2001). Broadcast engineers have documented the signal processing pipelines that condition electrical signals and prepare them for modulation and transmission (Pizzi, 2015).

Although the engineering literature is strong, communication studies offers little that explains these processes in terms accessible to media students. One exception is Zhao and Wang (2019), who relate sensor physics to audiovisual quality in digital broadcasting. Gaps remain, however, in integrating the foundational science with real-world broadcast architecture and with the issues of noise, latency, and bandwidth limitations.

3.0 Conceptual Review

3.1 What is Transduction?

Transduction is the conversion of one form of energy into another. In broadcast media, it is the conversion of physical stimuli, such as sound pressure waves or photons of light, into electrical signals (Hecht, 2016). These electrical signals can then be amplified, conditioned, digitized, modulated, and transmitted via electromagnetic carriers.

In biological systems, signal transduction refers to the cellular processes by which stimuli trigger biochemical responses (Alberts et al., 2015). Although the physical mechanisms differ, the general pattern of energy conversion is common to the engineering and biological senses of the term. This paper uses the engineering definition of transduction to describe broadcast technology.

3.2 Sound as a Pressure Wave

Sound is a mechanical variation of pressure that travels through a medium such as air (Kinsler et al., 1999). These pressure changes are detected by human hearing and by transducers such as microphones.
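To make the idea of pressure variation concrete, the following minimal sketch converts an RMS sound pressure into a sound pressure level in decibels, using the standard reference pressure of 20 micropascals in air. The function name and the sample pressures are illustrative assumptions and do not come from the cited sources.

```python
import math

# Standard reference pressure for sound in air (20 micropascals).
P_REF = 20e-6


def sound_pressure_level(p_rms: float) -> float:
    """Return the level in dB SPL for an RMS pressure given in pascals."""
    if p_rms <= 0:
        raise ValueError("RMS pressure must be positive")
    return 20.0 * math.log10(p_rms / P_REF)


if __name__ == "__main__":
    # Illustrative pressures only: the reference itself, a moderate
    # level, and 1 Pa (a common microphone calibration pressure).
    for pressure in (20e-6, 0.02, 1.0):
        print(f"{pressure:g} Pa -> {sound_pressure_level(pressure):.1f} dB SPL")
```

Because the scale is logarithmic, each tenfold increase in pressure adds 20 dB, which is why microphones must handle a very wide range of input pressures.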

3.3 Light as Electromagnetic Radiation

Light is the visible portion of the electromagnetic spectrum and consists of oscillating electric and magnetic fields (Tipler & Mosca, 2007). Broadcast cameras capture visible light and convert it into electrical representations of brightness and color.

4.0 Theoretical Framework

This section discusses the scientific theories that underlie the practical application of transduction.

4.1 Electromagnetic Induction and the Dynamic Microphone

Dynamic microphones rely on Faraday's law of electromagnetic induction: a conductor moving in a magnetic field develops a voltage proportional to the rate of change of magnetic flux (Griffiths, 2017). In a dynamic microphone, sound waves displace a diaphragm to which a coil is attached. The coil moves back and forth in the magnetic field, inducing a voltage that follows the waveform of the sound.
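The relationship can be sketched numerically. The example below assumes the simplest textbook case, a coil of total wire length l moving at velocity v perpendicular to a uniform field B, for which the induced voltage is e = B l v. The field strength, wire length, and diaphragm displacement are illustrative assumptions, not measurements of any real microphone.

```python
import math


def diaphragm_velocity(amplitude_m: float, freq_hz: float, t: float) -> float:
    """Velocity of a diaphragm whose displacement is x(t) = X sin(2*pi*f*t)."""
    omega = 2 * math.pi * freq_hz
    return omega * amplitude_m * math.cos(omega * t)


def coil_emf(b_tesla: float, wire_length_m: float, velocity_m_s: float) -> float:
    """Motional EMF in volts for a wire moving perpendicular to the field."""
    return b_tesla * wire_length_m * velocity_m_s


if __name__ == "__main__":
    # Assumed values for illustration only.
    B = 1.0        # magnetic flux density in tesla
    LENGTH = 5.0   # total length of coil wire in metres
    X = 1e-6       # peak diaphragm displacement in metres
    F = 1000.0     # tone frequency in hertz

    # Print the induced voltage over one cycle of the tone.
    for step in range(9):
        t = step / (8 * F)
        volts = coil_emf(B, LENGTH, diaphragm_velocity(X, F, t))
        print(f"t = {t * 1e3:.3f} ms  emf = {volts * 1e3:+.2f} mV")
```

The output voltage tracks the velocity of the diaphragm rather than its position, so it is largest as the diaphragm passes through its rest point.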

4.2 Capacitance Variation and Condenser Microphones

Condenser microphones rely on the variation in capacitance between two plates (the diaphragm and the backplate). As sound waves change the plate separation, the capacitance varies, and an electrical signal is produced (Everest & Pohlmann, 2015).
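A minimal sketch of this mechanism follows, assuming an ideal parallel-plate capsule that holds a constant charge, so that C = ε0·A/d and V = Q/C. The plate area, rest gap, and bias voltage are hypothetical values chosen only to show the trend.

```python
EPSILON_0 = 8.854e-12  # permittivity of free space in farads per metre


def plate_capacitance(area_m2: float, gap_m: float) -> float:
    """Capacitance of an ideal parallel-plate capacitor with an air gap."""
    return EPSILON_0 * area_m2 / gap_m


def capsule_voltage(bias_v: float, area_m2: float,
                    rest_gap_m: float, gap_m: float) -> float:
    """Voltage across a capsule that keeps the charge it had at rest.

    The charge is fixed at Q = C_rest * bias, so V = Q / C scales
    linearly with the instantaneous gap.
    """
    charge = plate_capacitance(area_m2, rest_gap_m) * bias_v
    return charge / plate_capacitance(area_m2, gap_m)


if __name__ == "__main__":
    # Assumed capsule: 1 cm^2 plates, 25 micrometre gap, 48 V bias.
    AREA, REST_GAP, BIAS = 1e-4, 25e-6, 48.0
    print(f"rest capacitance: {plate_capacitance(AREA, REST_GAP) * 1e12:.1f} pF")
    for gap in (24e-6, 25e-6, 26e-6):
        volts = capsule_voltage(BIAS, AREA, REST_GAP, gap)
        print(f"gap {gap * 1e6:.0f} um -> {volts:.2f} V")
```

Under the constant-charge assumption the voltage change is proportional to the change in gap, which is what lets the capsule reproduce the waveform of the sound.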

4.3 Photoelectric Effect and Camera Sensors

Photoelectric principles govern light transduction in cameras: incident photons release electrons in a semiconductor material (Wolf & Tauber, 2000). In sensors such as CMOS and CCD devices, photons strike the pixels, the freed electrons accumulate as charge, and that charge is converted to a voltage, proportional to the light intensity, which is then digitized.
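The chain from photons to a digital value can be illustrated with a simplified pixel model. The sketch below computes the energy of a photon from E = hc/λ and then maps a photon count to a digital code. The quantum efficiency, full-well capacity, and bit depth are assumed example values, and noise is ignored.

```python
PLANCK = 6.626e-34     # Planck constant in joule seconds
LIGHT_SPEED = 2.998e8  # speed of light in metres per second


def photon_energy(wavelength_m: float) -> float:
    """Energy in joules of a single photon of the given wavelength."""
    return PLANCK * LIGHT_SPEED / wavelength_m


def pixel_code(photons: float, quantum_efficiency: float = 0.5,
               full_well: int = 10_000, bits: int = 10) -> int:
    """Digital code for one pixel in an idealized, noise-free model.

    photons * quantum_efficiency gives the collected electrons, which
    saturate at the full-well capacity before being scaled to the
    range of the analogue-to-digital converter.
    """
    electrons = min(photons * quantum_efficiency, full_well)
    return round(electrons / full_well * (2 ** bits - 1))


if __name__ == "__main__":
    print(f"green photon (550 nm): {photon_energy(550e-9):.3e} J")
    for count in (0, 4_000, 20_000, 100_000):
        print(f"{count:>7} photons -> code {pixel_code(count)}")
```

The saturation step shows why very bright areas clip: once the pixel is full, additional photons no longer change the output code.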

These theories provide the foundation for turning physical stimuli into useful electronic information in broadcasting.

5.0 Methodology

This article uses a theoretical methodology based on analysis and synthesis. It combines scientific concepts from physics and engineering with broadcast technology standards to model the transduction process. The sources are peer-reviewed engineering textbooks, journal articles on sensors and signal processing, and documentation of broadcast technology standards.

No empirical data were gathered; instead, the research examines available theory and documented findings to develop an explanatory model suited to communication students.

6.0 Findings

6.1 The Processes of Sound Transduction

Several types of microphone use different physical principles to generate electrical signals:

- Dynamic Microphones: Electromagnetic induction yields robust signals and durable hardware, making these microphones suitable for live broadcasts and high sound pressure levels (Griffiths, 2017).

- Condenser Microphones: Capacitance variation allows high-quality recording, which is why these microphones are common in studio broadcasting (Everest & Pohlmann, 2015).

- Ribbon Microphones: A thin metallic ribbon suspended in a magnetic field generates the signal, giving a smooth and natural frequency response (Baker, 2010).

6.2 The Photoelectric Process of Light Transduction

Camera image sensors convert light into digital images:

- CCD Sensors: Charge-coupled devices transfer the charge collected at each pixel across the chip to be read out, which provides high image quality at the cost of higher power consumption (Janesick, 2001).

- CMOS Sensors: Amplification is performed locally at each pixel, so readout is fast and power consumption is low, which explains their use in contemporary broadcast cameras (Holst & Lomheim, 2011).

Color capture relies on color filter arrays (e.g., the Bayer pattern) that sample red, green, and blue at separate pixels; the samples are then interpolated into full-color images.
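The filter-array idea can be sketched in a few lines. The example below assumes an RGGB Bayer layout and uses a deliberately crude reconstruction that merges each 2x2 block into a single color pixel. Real cameras interpolate at full resolution, so this is an illustration of the principle, not a production demosaicing method; all function names are hypothetical.

```python
def bayer_channel(row: int, col: int) -> str:
    """Channel passed by the filter at (row, col) in an RGGB layout."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def mosaic(rgb_image):
    """Keep, for each pixel, only the channel its filter lets through.

    rgb_image is a list of rows, each row a list of (r, g, b) tuples.
    """
    index = {"R": 0, "G": 1, "B": 2}
    return [
        [pixel[index[bayer_channel(r, c)]] for c, pixel in enumerate(row)]
        for r, row in enumerate(rgb_image)
    ]


def superpixel_demosaic(raw):
    """Rebuild one (r, g, b) pixel per 2x2 block, averaging both greens."""
    out = []
    for r in range(0, len(raw) - 1, 2):
        out_row = []
        for c in range(0, len(raw[r]) - 1, 2):
            red = raw[r][c]
            green = (raw[r][c + 1] + raw[r + 1][c]) / 2
            blue = raw[r + 1][c + 1]
            out_row.append((red, green, blue))
        out.append(out_row)
    return out


if __name__ == "__main__":
    # A flat orange patch, 2 rows by 4 columns.
    scene = [[(200, 100, 50)] * 4 for _ in range(2)]
    raw = mosaic(scene)
    print(raw)
    print(superpixel_demosaic(raw))
```

Each photosite records only one of the three channels, so two thirds of the color information at every pixel has to be estimated from its neighbors.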

6.3 Signal Conditioning and Transmission

After transduction has generated the electrical signals, the broadcast chain amplifies and filters them, converts them from analogue to digital, encodes and compresses them, and modulates them for transmission over RF or digital networks (Pizzi, 2015; ITU-T, 2020).
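The analogue-to-digital step in this chain can be sketched as sampling followed by quantization. The example below samples a unit-amplitude sine tone and rounds each sample to a signed integer code. It also evaluates the standard approximation for the signal-to-quantization-noise ratio of an ideal converter, 6.02N + 1.76 dB for N bits. The 48 kHz sample rate and 16-bit depth are assumed example values.

```python
import math


def sample_and_quantize(freq_hz: float, sample_rate_hz: float,
                        n_samples: int, bits: int) -> list:
    """Sample a unit-amplitude sine and quantize to signed integer codes."""
    max_code = 2 ** (bits - 1) - 1
    return [
        round(math.sin(2 * math.pi * freq_hz * n / sample_rate_hz) * max_code)
        for n in range(n_samples)
    ]


def ideal_snr_db(bits: int) -> float:
    """Approximate SNR of an ideal N-bit converter for a full-scale sine."""
    return 6.02 * bits + 1.76


if __name__ == "__main__":
    # One cycle of a 1 kHz tone at an assumed 48 kHz sample rate.
    codes = sample_and_quantize(1000.0, 48000.0, 48, 16)
    print(codes[:13])
    print(f"ideal 16-bit SNR: {ideal_snr_db(16):.2f} dB")
```

Each additional bit improves the ideal ratio by about 6 dB, which is one reason bit depth is a basic design choice in a broadcast signal chain.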

7.0 Discussion

7.1 Quality Challenges

Even with accurate transduction, broadcast systems face physical constraints:

- Noise: Electrical and environmental noise can corrupt signals. Digital filtering, cable shielding, and balanced audio lines reduce noise but cannot remove it completely (Rossing & Fletcher, 2004).

- Latency: Digital processing introduces delay. Latency affects audio-video synchronization in live applications and must be accounted for in system design (Zhao & Wang, 2019).

- Bandwidth Constraints: High-resolution video and multichannel audio require very high bandwidth. Advanced compression algorithms trade quality against the data rate that can be broadcast (ITU-T, 2020).
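The scale of the bandwidth constraint can be estimated with simple arithmetic. The sketch below computes the uncompressed bit rate of a video stream and the compression ratio needed to fit it into a channel. The 1080p50 format, the 20 bits per pixel (10-bit 4:2:2 sampling), and the 20 Mbit/s channel capacity are assumptions chosen for illustration, not figures from the cited standards.

```python
def uncompressed_video_bps(width: int, height: int,
                           frames_per_second: float,
                           bits_per_pixel: float) -> float:
    """Raw video bit rate in bits per second, ignoring blanking and audio."""
    return width * height * frames_per_second * bits_per_pixel


def required_compression_ratio(source_bps: float, channel_bps: float) -> float:
    """How many times the source must shrink to fit the channel."""
    return source_bps / channel_bps


if __name__ == "__main__":
    # Assumed example: 1920x1080 at 50 frames per second, 20 bits per pixel.
    raw = uncompressed_video_bps(1920, 1080, 50, 20)
    # Assumed channel capacity of 20 Mbit/s.
    ratio = required_compression_ratio(raw, 20e6)
    print(f"uncompressed: {raw / 1e9:.2f} Gbit/s")
    print(f"compression needed for 20 Mbit/s: about {ratio:.0f}:1")
```

Under these assumptions the raw stream exceeds 2 Gbit/s, so compression on the order of 100:1 would be needed, which is why codec choice weighs so heavily on perceived quality.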

7.2 Educational Implications

A scientific understanding of transduction gives communication students technical literacy, a basis for informed decisions, and interdisciplinary thinking that connects physics, engineering, and media studies.

8.0 Conclusion

Transduction, the conversion of sound waves and light into electrical signals, is the basis of broadcast technology. By discussing the physical principles, the mechanisms by which devices operate, and the system-level challenges, this article bridges the gap between fundamental science and the practical deployment of devices in broadcasting. For communication students, understanding transduction strengthens technical knowledge and supports more effective engagement with broadcast technologies.

References

Alberts, B., Johnson, A., Lewis, J., Morgan, D., Raff, M., Roberts, K., & Walter, P. (2015). Molecular biology of the cell (6th ed.). Garland Science.

Baker, R. (2010). Ribbon microphone technology: A review. Acoustical Review Quarterly, 5(2), 78–89.

Everest, F. A., & Pohlmann, K. C. (2015). Master handbook of acoustics (6th ed.). McGraw-Hill Education.

Griffiths, D. J. (2017). Introduction to electrodynamics (4th ed.). Cambridge University Press.

Hecht, E. (2016). Optics (5th ed.). Pearson.

Holst, G. C., & Lomheim, T. S. (2011). CMOS/CCD sensors and camera systems. SPIE Press.

ITU-T. (2020). Video coding standards (H.264, HEVC). International Telecommunication Union.

Janesick, J. (2001). Scientific charge-coupled devices. SPIE Press.

Kinsler, L. E., Frey, A. R., Coppens, A. B., & Sanders, J. V. (1999). Fundamentals of acoustics (4th ed.). Wiley.

Pizzi, S. (2015). Signal processing pipelines for digital broadcasting. Journal of Media Engineering, 12(3), 45–60.

Rossing, T. D., & Fletcher, N. H. (2004). Principles of vibration and sound (2nd ed.). Springer.

Smith, J. (2018). Principles of broadcast technology. Technical Press.

Tipler, P. A., & Mosca, G. (2007). Physics for scientists and engineers (6th ed.). W. H. Freeman.

Wolf, S., & Tauber, R. N. (2000). Silicon photodetectors: Physics and technology. Springer.

Zhao, L., & Wang, H. (2019). Sensor science and image quality in digital broadcast cameras. International Journal of Broadcast Engineering, 7(1), 22–40.